When ChatGPT generates a response, it does more than pull a few familiar blue links.

It expands queries, retrieves pages from across the web, and cites only a small fraction of what it finds. For brands trying to understand why they appear in some answers and disappear in others, that process is still largely a black box.

That lack of visibility creates a real challenge. A brand may have strong content, solid rankings, and broad topic coverage, yet still have no clear view into how ChatGPT actually discovers sources, how often it relies on follow-up searches, or how search visibility turns into citation visibility.

Take a commercial query like “What should I look for in VDR vendors?” For this prompt, ChatGPT searched both the original phrase and a longer follow-up query covering features, security, pricing, and support. A brand with a domain authority (DA) of 43 earned the citation, consistent with the broader pattern that pages gain visibility when they cover both the main query and the supporting topics ChatGPT branches into.

This shifts what brands should prioritize next: understanding where citation drops off after retrieval, tracking the fan-out queries that expand the search path, strengthening coverage around the supporting topics those queries reveal, and focusing refreshes on pages already within reach of stronger visibility in Google.

With AirOps, teams can surface the fan-out queries ChatGPT generates, helping brands understand the broader search paths behind a prompt and identify content opportunities beyond the original query.

Those practical implications come from a broader set of patterns we examined across retrieval, fan-out behavior, and citation selection. To understand that process, our research focused on three questions:

- How does ChatGPT generate fan-out queries and expand the search path around a single prompt?

- How do Google rankings shape retrieval and citation across original and fan-out queries?

- How do cited pages differ from the much larger pool of retrieved but not cited pages?

Together, these patterns create a clearer view of how ChatGPT moves from search to citation, giving marketers a stronger foundation for deciding what content to create, what to refresh, and where visibility opportunities are still being missed.

Methodology

This study examines how ChatGPT researches, retrieves, and cites sources during answer generation.

We began with 15,000 original queries. During answer generation, ChatGPT retrieved 548,534 pages and generated internal follow-up searches (fan-out queries), which expanded the total query set to 43,233.

To understand how query intent shaped retrieval and fan-out behavior, we organized the 15,000 original queries into 8 evenly sized intent types, with 1,875 queries per type, grouped under two broader categories.

Informational intent:

- Definition: what something is or means.

- Evaluation: the benefits or merits of an approach.

- Exploration: when or why to use something.

- How-to: step-by-step procedural guidance.

Commercial intent:

- Awareness: what solutions exist for a problem.

- Comparison: head-to-head product comparisons.

- Research: best or top options within a category.

- Validation: specific product details such as pricing, features, or compatibility.
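The query counts in this setup can be checked with a few lines of arithmetic. The sketch below is illustrative only: the variable names are ours, and it assumes the 43,233 total is simply the 15,000 original queries plus every fan-out query, with no deduplication.

```python
# Intent taxonomy from the methodology. Names are illustrative, not the study's code.
INTENT_TYPES = {
    "informational": ["definition", "evaluation", "exploration", "how-to"],
    "commercial": ["awareness", "comparison", "research", "validation"],
}
QUERIES_PER_TYPE = 1_875   # reported in the methodology
TOTAL_QUERY_SET = 43_233   # reported: original plus fan-out queries

n_types = sum(len(types) for types in INTENT_TYPES.values())
original_queries = n_types * QUERIES_PER_TYPE

# Assumption: the total query set is originals plus fan-outs, nothing else.
fanout_queries = TOTAL_QUERY_SET - original_queries
fanout_per_prompt = fanout_queries / original_queries

print(original_queries, fanout_queries, round(fanout_per_prompt, 2))
```

Under that assumption, the reported figures imply 28,233 fan-out queries, or roughly 1.9 per original prompt.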

From each ChatGPT response, we extracted three outputs:

- The URLs explicitly cited in the final answer.

- The URLs ChatGPT retrieved but did not cite.

- The internal follow-up queries ChatGPT generated while researching the original prompt.

We then mapped both cited and retrieved-but-not-cited URLs against Google’s top 20 results for each original query and each fan-out query. This helped us understand how ChatGPT expands prompts through fan-out, what those follow-up searches reveal about how it researches a question, and how Google rankings relate to both retrieval and citation.
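The mapping step can be sketched roughly as follows. This is a minimal illustration, not the pipeline used in the study: the `Response` record, its field names, and the `serps` lookup (query to ordered list of top-20 URLs) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    """One ChatGPT answer. Field names are hypothetical, not the study's schema."""
    original_query: str
    fanout_queries: list
    cited_urls: set
    retrieved_urls: set  # every retrieved URL, cited or not


def best_rank(url: str, queries: list, serps: dict) -> Optional[int]:
    """Best (lowest) 1-based Google position of `url` across `queries`, or None."""
    ranks = [
        serps[q].index(url) + 1
        for q in queries
        if url in serps.get(q, [])
    ]
    return min(ranks) if ranks else None


def map_response(resp: Response, serps: dict) -> list:
    """Label each retrieved URL as cited or not and attach its best top-20 rank."""
    all_queries = [resp.original_query, *resp.fanout_queries]
    return [
        {
            "url": url,
            "cited": url in resp.cited_urls,
            "best_rank": best_rank(url, all_queries, serps),
        }
        for url in sorted(resp.retrieved_urls)
    ]
```

A URL with `best_rank` of `None` was retrieved by ChatGPT without appearing in the top 20 for the original query or any fan-out query.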

85% of Sources ChatGPT Retrieves Are Never Cited

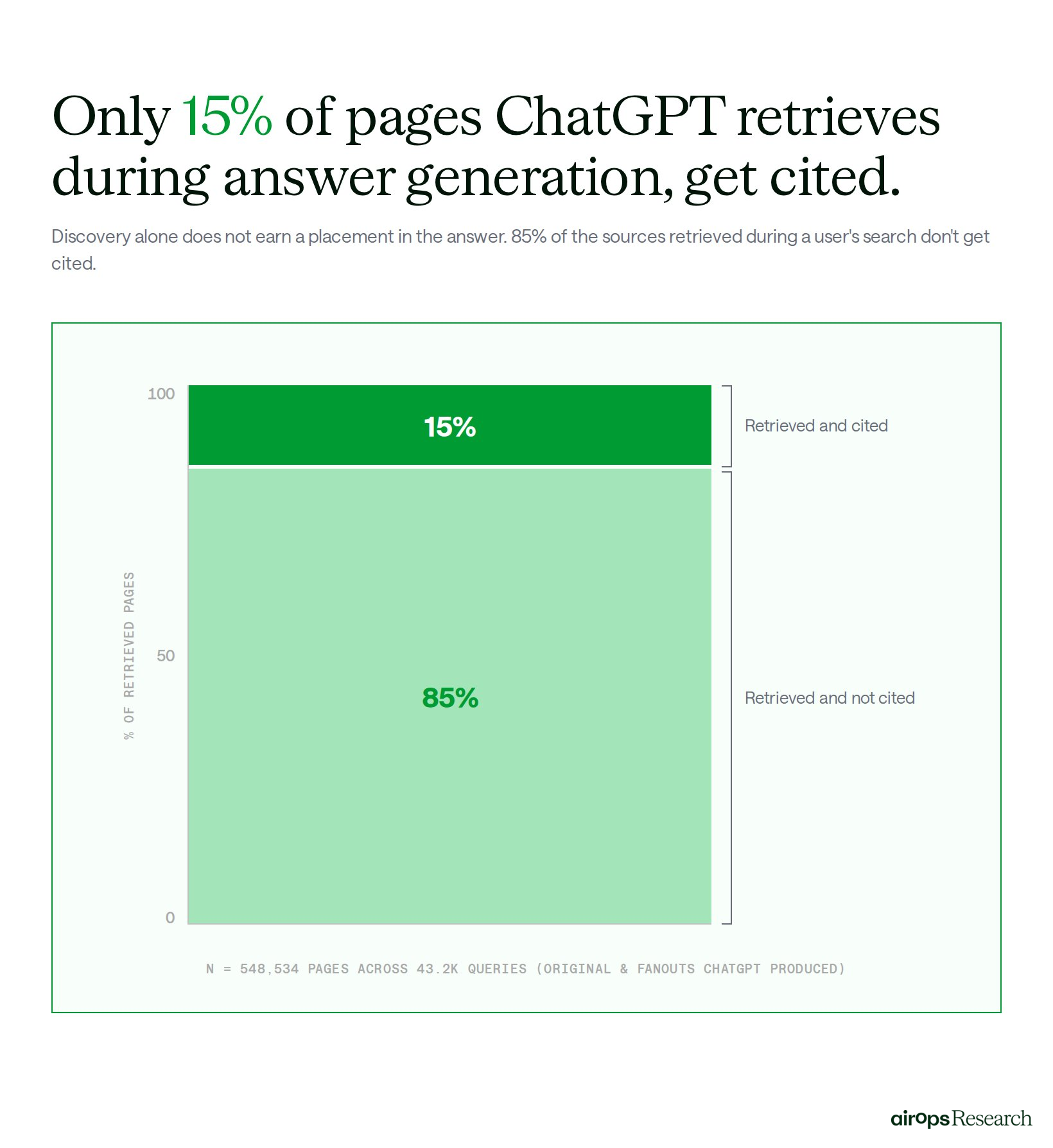

ChatGPT retrieves far more pages than it ultimately cites, which means being found is only the opening stage of the process.

Across the 548,534 pages ChatGPT retrieved during answer generation, only 15% were cited in the final response.

For brands, that changes the visibility challenge: retrieval creates the opportunity to be considered, but citation depends on whether a page is selected over the many other sources ChatGPT surfaced for the same answer.

That drop-off was not consistent across every type of search query.

When we segmented the dataset by query type, the share of retrieved pages that ultimately earned a citation varied meaningfully across categories.

Product-discovery and how-to queries produced the highest citation rates at 18.3% and 16.9%, while validation and comparison queries were lower at 11.3% and 13.1%. At a directional level, that suggests brands should not expect the same citation dynamics across every type of search. Some query types appear to give pages a stronger chance of carrying through from retrieval to citation than others.

The pages that did make it into the final answer also shared several patterns.

Pages with stronger title-query alignment and clearer language were more likely to earn citations, and sites in the middle of the domain authority curve (DA 40-80) were cited more often than those at the top.

Pages with 50% or greater title-query overlap saw a 20.1% citation rate, compared with 9.3% for pages with less than 10% overlap, roughly a 2.2x lift. Readability followed a similar pattern, with Flesch Reading Ease scores of 50 or higher more common among the pages ChatGPT chose to cite.
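Both signals are straightforward to approximate for your own pages. The sketch below makes one loud assumption: the study does not say how title-query overlap was calculated, so here it is taken to be the share of distinct query words that also appear in the page title. The readability function uses the standard Flesch Reading Ease formula and expects word, sentence, and syllable counts you have already computed.

```python
import re


def tokens(text: str) -> set:
    """Lowercased alphanumeric word set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def title_query_overlap(title: str, query: str) -> float:
    """Assumed definition: share of distinct query words found in the title (0.0 to 1.0)."""
    query_words = tokens(query)
    if not query_words:
        return 0.0
    return len(query_words & tokens(title)) / len(query_words)


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch Reading Ease score from pre-computed counts."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# Hypothetical page title, scored against the VDR query from the introduction.
overlap = title_query_overlap(
    "How to Choose VDR Vendors: What to Look For",
    "What should I look for in VDR vendors?",
)
print(f"{overlap:.1%}")
```

A different overlap definition (for example, ignoring stop words) would shift the percentages, so treat the 50% and 10% thresholds as directional when applying them to your own scoring.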

The takeaway is that retrieval alone does not translate into citation visibility.

For brands, the more useful benchmark is not just whether a page enters ChatGPT’s retrieval set, but where citation drops off after discovery. That gap helps identify which query types are harder to win, which retrieved pages are not strong enough to be cited, and where changes to alignment, clarity, or page focus may improve the odds of selection.

Pages Ranking #1 in Google Were Cited 3.5x More Often Than Pages Outside the Top 20 Results

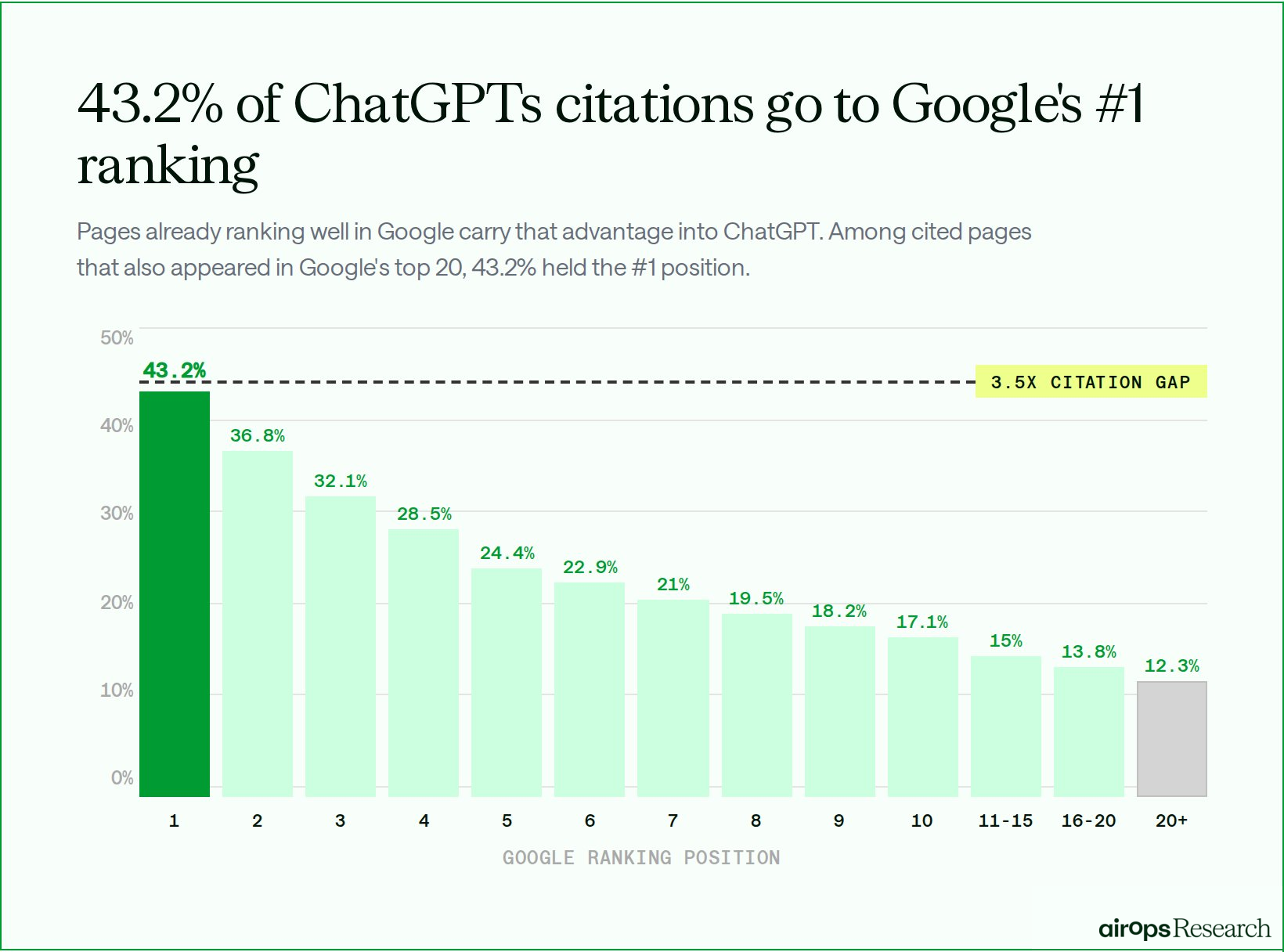

Strong Google rankings still carry a measurable citation advantage in ChatGPT.

When we mapped cited pages against Google’s top 20 results across all 43,233 original and fan-out queries, we found that 55.8% of all cited pages ranked in the top 20 for at least one query.

More importantly, the citation advantage was strongest at the very top of the SERP.

Among pages ranking #1 in Google, 43.2% were cited by ChatGPT. That was 3.5 times higher than the citation rate for pages ranking beyond Google’s top 20 results.

For brands, this makes ranking strength a competitive advantage, not just a traffic metric.

Pages already performing at the top of Google do not simply enter ChatGPT’s retrieval set more often. They also enter the citation process with a much stronger likelihood of being selected.

That shifts the practical priority from broad visibility to position strength.

The goal is not only to appear somewhere in the top 20, but to move high-value pages closer to the top of the SERP, where the citation advantage is strongest. Brands should audit pages already ranking in positions 10 through 20 for high-intent queries, look for low-hanging opportunities to improve depth and relevance, and expand coverage around the adjacent questions ChatGPT is likely to evaluate.
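The audit described above amounts to a simple filter over rank-tracking data. The sketch below assumes a hypothetical `Ranking` record exported from whatever rank tracker you use; the `high_intent` flag is something your own team would assign, not a field defined by the study.

```python
from typing import NamedTuple


class Ranking(NamedTuple):
    url: str
    query: str
    position: int      # 1-based Google position
    high_intent: bool  # assigned by your team; hypothetical field


def refresh_candidates(rankings, lo: int = 10, hi: int = 20) -> list:
    """Pages ranking in positions lo..hi for high-intent queries, best position first."""
    picked = [r for r in rankings if r.high_intent and lo <= r.position <= hi]
    return sorted(picked, key=lambda r: r.position)


# Hypothetical sample data.
rows = [
    Ranking("https://example.com/vdr-guide", "vdr vendors", 14, True),
    Ranking("https://example.com/vdr-pricing", "vdr pricing", 11, True),
    Ranking("https://example.com/blog/news", "vdr news", 12, False),
    Ranking("https://example.com/home", "virtual data room", 3, True),
]
for r in refresh_candidates(rows):
    print(r.position, r.url)
```

Sorting by position puts the pages closest to page one first, which is usually where a refresh is most likely to pay off.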

Fan-out Queries Create a Second Citation Surface Most Brands Haven’t Tapped Into

Most brands track rankings for their primary target keywords. A substantial share of citation opportunity sits outside that narrow view.

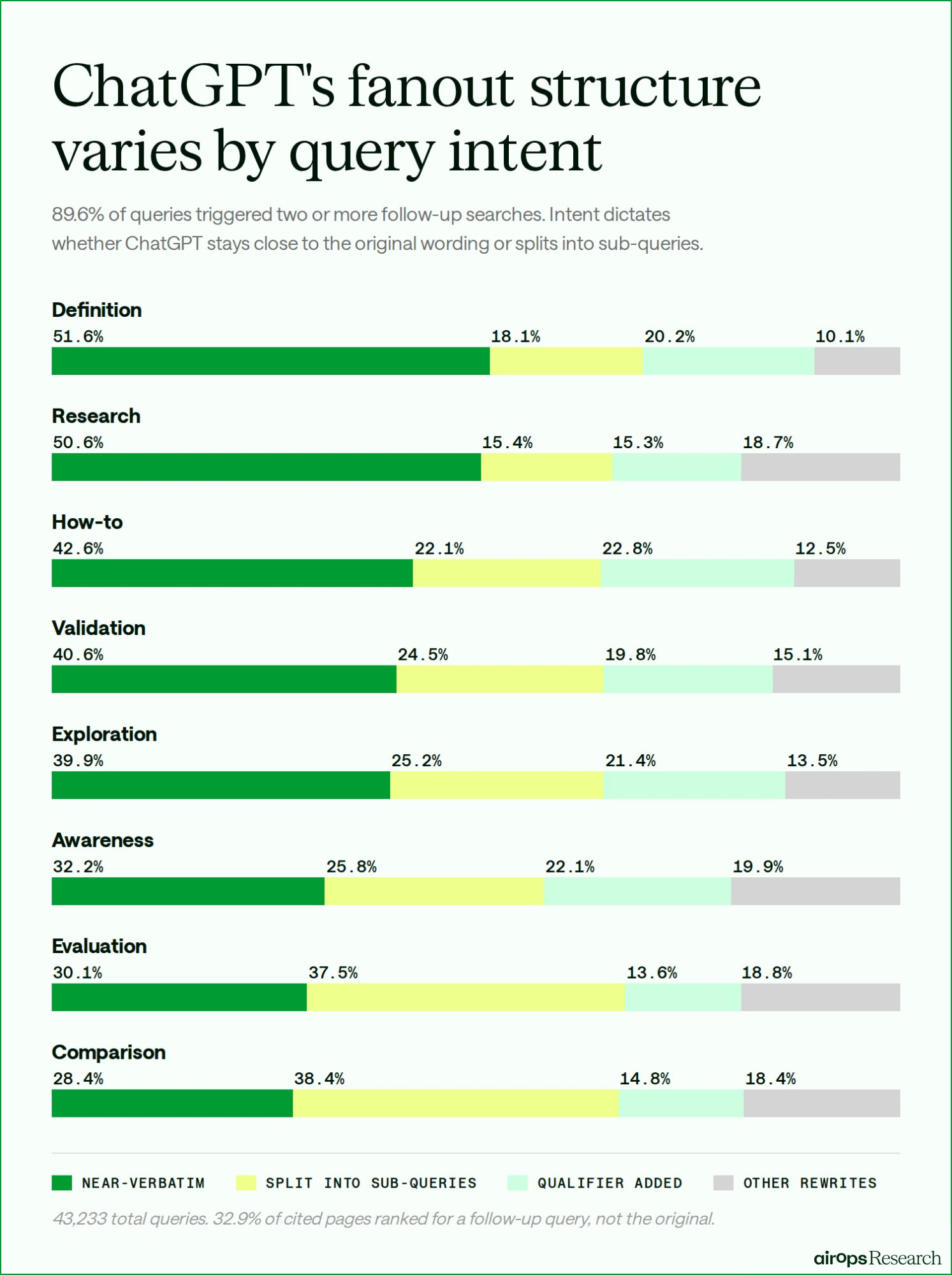

Across the 15,000 original prompts in this study, ChatGPT generated two or more fan-out queries for 89.6% of prompts. These internal follow-up searches expanded the total query set to 43,233 original and fan-out queries, materially widening the search surface ChatGPT used during answer generation.

This matters because 32.9% of cited pages that appeared in any top-20 SERP were discovered only through fan-out.

That means tracking the original keyword alone is not enough to understand where citation visibility is actually won. Fan-out shows the follow-up searches ChatGPT uses to build answers, helping brands identify coverage gaps, prioritize refreshes, and see which follow-up searches influence citation.
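The fan-out-only share can be expressed as a few set operations. This is a minimal sketch with hypothetical inputs: three sets of URLs, one for cited pages, one for pages in the top 20 for original queries, and one for pages in the top 20 for fan-out queries.

```python
def fanout_only_share(cited: set, original_serp: set, fanout_serp: set) -> float:
    """Among cited URLs found in any top-20 SERP, the share found only via fan-out SERPs."""
    in_any_serp = cited & (original_serp | fanout_serp)
    if not in_any_serp:
        return 0.0
    fanout_only = in_any_serp - original_serp
    return len(fanout_only) / len(in_any_serp)


# Hypothetical example: three cited pages rank somewhere, two only for fan-out queries.
share = fanout_only_share(
    cited={"a.com", "b.com", "c.com", "d.com"},
    original_serp={"a.com", "x.com"},
    fanout_serp={"a.com", "b.com", "c.com"},
)
print(f"{share:.1%}")
```

Note that the denominator excludes cited pages that rank in no top-20 SERP at all (`d.com` in the example), matching how the 32.9% figure is framed.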

The Structure of Fan-out Queries

Across informational queries, ChatGPT often stayed relatively close to the wording of the original prompt. Overall, 39.9% of informational fan-out queries remained near-verbatim, with the most common adjustments adding qualifiers such as “explained,” “guide,” or “tutorial.”

- Definition queries stayed near-verbatim 51.6% of the time, the highest rate of any query type.

- How-to queries stayed near-verbatim 42.6% of the time. When they were rewritten, the most common pattern was adding category or use-case context, such as a specific tool or scenario.

- Evaluation queries behaved differently. ChatGPT split them into sub-queries 37.5% of the time, often branching into follow-up searches around benefits, limitations, or use cases.

Across commercial queries, the fan-out pattern shifted more clearly toward decomposition. Instead of lightly rewriting the original search, ChatGPT more often broke the question into component-level follow-up searches, including alternatives, comparisons, pricing, and feature-specific terms.

- Awareness queries showed no single dominant rewrite pattern. A query such as “What email marketing platforms are available for ecommerce” might be searched near-verbatim, expanded into “ecommerce email automation software,” or reframed as a comparison.

- Research queries stayed near-verbatim 50.6% of the time, but were also the only type where year modifiers appeared at meaningful volume, at 9.7%.

- Comparison queries split into sub-queries 38.4% of the time, the highest of any type. A query like “HubSpot vs Salesforce” became separate searches for pricing (for example, “HubSpot pricing vs Salesforce”), features, or reviews rather than a single head-to-head search.

- Validation queries stayed near-verbatim 40.6% of the time, below the 50.6% rate for research queries.
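To apply the same lens to your own fan-out data, a rough classifier can separate near-verbatim rewrites from heavier rewrites. The sketch below is an assumption-heavy illustration: the study does not describe its classification method, so the word-level similarity measure (Python's `difflib.SequenceMatcher`) and the 0.8 threshold are our own choices, and the labels are simplified to three buckets.

```python
import re
from difflib import SequenceMatcher

YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def words(text: str) -> list:
    """Lowercased alphanumeric words, in order."""
    return re.findall(r"[a-z0-9]+", text.lower())


def classify_fanout(original: str, fanout: str, threshold: float = 0.8) -> str:
    """Label a fan-out query relative to the original prompt.

    The threshold is an arbitrary assumption; tune it against hand-labeled examples.
    """
    if YEAR.search(fanout) and not YEAR.search(original):
        return "year-modifier"
    ratio = SequenceMatcher(None, words(original), words(fanout)).ratio()
    return "near-verbatim" if ratio >= threshold else "rewritten"


# Hypothetical prompts, plus the awareness example from the list above.
print(classify_fanout("what is a virtual data room",
                      "what is a virtual data room explained"))
print(classify_fanout("best crm software", "best crm software 2025"))
print(classify_fanout("What email marketing platforms are available for ecommerce",
                      "ecommerce email automation software"))
```

A word-level ratio cannot tell a light qualifier from a true sub-query split when only one word changes, so decomposition patterns such as pricing or feature splits would need an additional rule or manual review.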

Fan-out changes the content planning problem: the goal is not only to rank for the original query, but to cover the follow-up searches ChatGPT generates while building an answer.

For teams, that means three things in practice:

- Track fan-out coverage, not just primary keywords. A meaningful share of citation visibility comes from follow-up searches, not the starting query.

- Match content format to intent. Informational queries reward depth on the core topic, while commercial queries reward modular coverage across pricing, features, alternatives, and comparisons.

- Use refreshes to expand fan-out coverage. Strengthening existing pages around adjacent questions can open new citation paths without creating entirely new content.
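Tracking fan-out coverage can start as a simple check of which fan-out queries already surface your domain in Google's top 20. The sketch below is illustrative: `fanout_serps` is a hypothetical mapping from each fan-out query to its top-20 URLs, and the domain match is exact after stripping `www.`, so other subdomains would need extra handling.

```python
from urllib.parse import urlparse


def host(url: str) -> str:
    """Hostname of an absolute URL, lowercased, without a leading 'www.'."""
    netloc = urlparse(url).netloc.lower()
    return netloc[4:] if netloc.startswith("www.") else netloc


def fanout_coverage(domain: str, fanout_serps: dict):
    """Return (share of fan-out queries where `domain` ranks, list of uncovered queries)."""
    gaps = [
        query
        for query, urls in fanout_serps.items()
        if domain not in {host(u) for u in urls}
    ]
    total = len(fanout_serps)
    share = (total - len(gaps)) / total if total else 0.0
    return share, gaps


# Hypothetical SERP data for three fan-out queries.
serps = {
    "vdr pricing": ["https://www.example.com/pricing", "https://other.com/a"],
    "vdr security features": ["https://other.com/b"],
    "vdr support": ["https://example.com/support"],
}
share, gaps = fanout_coverage("example.com", serps)
print(round(share, 2), gaps)
```

The uncovered queries are the natural starting list for refreshes or new supporting content.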

~74% of Citations Go to Sites With DA Under 80

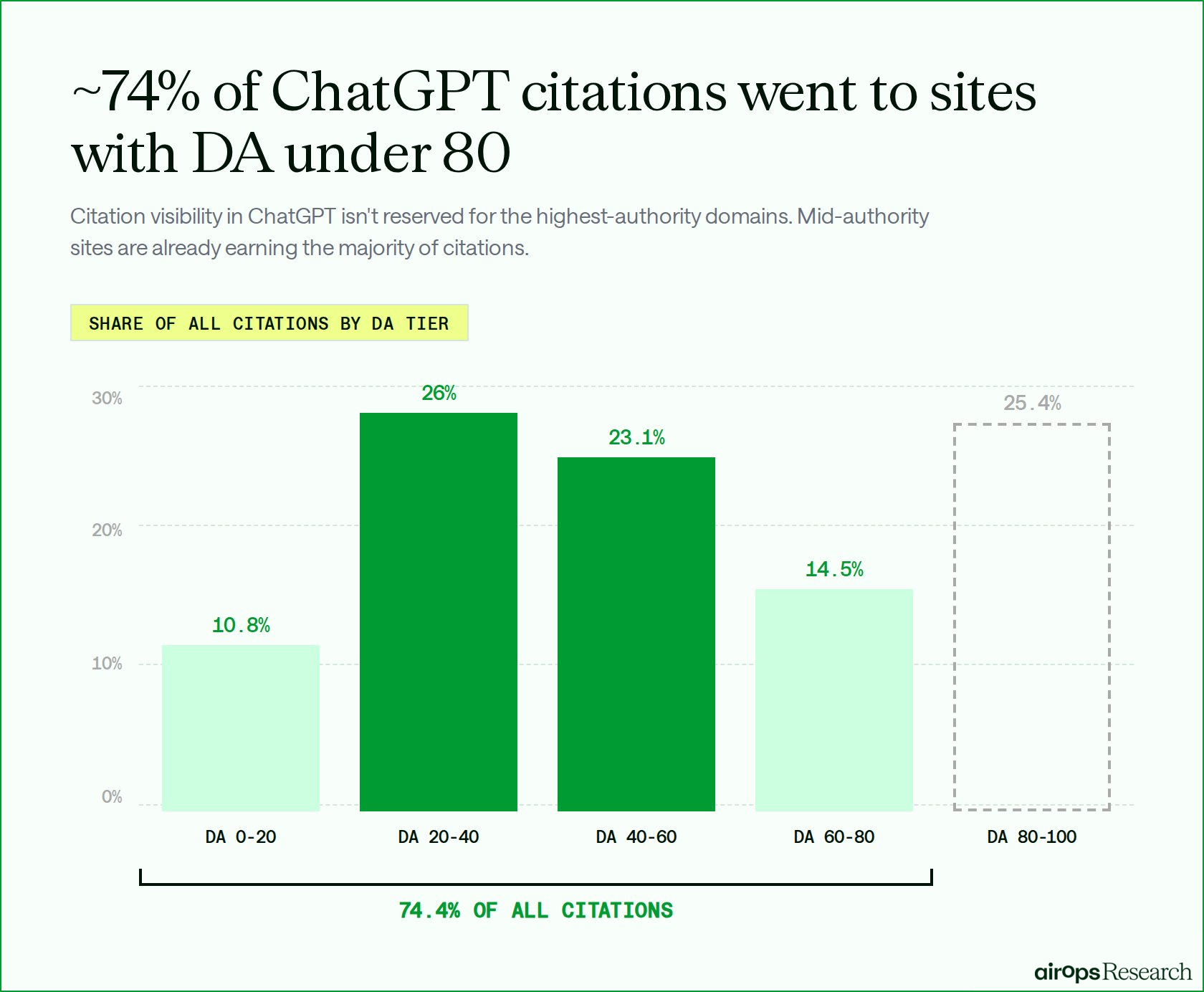

High domain authority is not a prerequisite for earning citations in ChatGPT.

Across the 82,108 citations in our dataset, nearly three-quarters went to sites with domain authority under 80. The majority of citations did not go to the highest authority domains, but to sites in the broad middle of the authority curve.

Sites with DA between 20 and 80 accounted for 63.6% of all citations. The DA 20-40 tier alone contributed a larger share than DA 80-100 sites, at 26.0% vs 25.4%.
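The remaining tier shares follow from the reported figures by subtraction. The short calculation below assumes the DA tiers partition all 82,108 citations, meaning every cited site has a DA score and belongs to exactly one tier; the variable names are ours.

```python
# Citation shares reported in the text, in percent.
share_20_80 = 63.6
share_20_40 = 26.0
share_80_100 = 25.4

# Derived shares, assuming the tiers cover every citation exactly once.
share_0_20 = round(100 - share_20_80 - share_80_100, 1)
share_40_80 = round(share_20_80 - share_20_40, 1)
share_under_80 = round(share_0_20 + share_20_80, 1)

print(share_0_20, share_40_80, share_under_80)
```

Under that assumption, sites below DA 20 account for about 11% of citations, the DA 40-80 band for about 37.6%, and everything under DA 80 for about 74.6%, which matches the headline figure.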

When we looked at how often ChatGPT actually cited a page after retrieving it, the pattern was consistent across most of the authority curve.

From DA 0 through DA 80, between 21.5% and 23.6% of retrieved pages earned a citation. The only tier that underperformed was DA 80-100 at 15.0%, even though its pages appeared in ChatGPT’s retrieval set more often than those of any other tier.
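A per-tier citation rate like this is the number of cited pages divided by the number of retrieved pages within each DA band. The sketch below is a hypothetical reconstruction: the 20-point bands and the choice to place DA 80 in the top tier are assumptions, since the study does not state its exact tier boundaries.

```python
from collections import defaultdict


def da_tier(da: int) -> str:
    """Assumed 20-point bands; DA 80 and DA 100 both fall in 'DA 80-100'."""
    if not 0 <= da <= 100:
        raise ValueError(f"DA out of range: {da}")
    lo = min(da // 20 * 20, 80)
    return f"DA {lo}-{lo + 20}"


def citation_rate_by_tier(pages) -> dict:
    """pages: iterable of (domain_authority, was_cited) pairs for retrieved pages."""
    retrieved = defaultdict(int)
    cited = defaultdict(int)
    for da, was_cited in pages:
        tier = da_tier(da)
        retrieved[tier] += 1
        cited[tier] += int(was_cited)
    return {tier: cited[tier] / retrieved[tier] for tier in retrieved}


# Hypothetical retrieved pages: (DA, cited?).
sample = [(43, True), (45, False), (90, False), (95, False), (85, True), (10, True)]
for tier, rate in sorted(citation_rate_by_tier(sample).items()):
    print(tier, f"{rate:.1%}")
```

Comparing this rate with each tier's share of retrieved pages shows whether a tier is being surfaced often but passed over at the citation stage.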

Earning citations in ChatGPT is more open than many brands assume.

Citation visibility is not limited to the most authoritative domains. Mid-authority (DA 40-80) sites are already earning a meaningful share of citations, especially in the query spaces where they already have relevance and search visibility.

For teams, that changes the competitive frame. The goal is not to beat the largest publishers everywhere, but to compete where ChatGPT is actually looking.

Brands can do that by building enough authority, query alignment, and topic coverage to show up in the retrieval environments that shape citation selection. The clearest opportunities often sit in longer-tail topics, where brands can create and refresh content that covers the supporting questions and subtopics ChatGPT explores while building an answer.

Strong Search Visibility Still Influences Citation Likelihood

Visibility in ChatGPT still begins with search visibility, but it no longer ends at the original query.

Pages that rank well in Google enter the citation process with a clear advantage, yet ChatGPT also expands beyond that starting point through fan-out searches that create additional paths to discovery.

For brands, that shifts how visibility in answer engines should be understood. Strong rankings still matter, but rankings alone do not capture the full search surface ChatGPT uses when building a response.

The clearest opportunities now sit in long-tail content creation and content refresh. Long-tail content helps brands expand into the follow-up queries ChatGPT generates, while content refresh helps stronger existing pages carry more of their search visibility into ChatGPT citations.

Ready to see where your brand stands?

Book a strategy session to learn how AirOps helps brands measure, grow, and win AI search.