When ChatGPT generates a response, it pulls in pages from across the web, evaluates them, and cites only a small share of what it sees. For brands trying to understand why one page gets cited and another does not, that selection step is hard to see.

Our earlier report on ChatGPT's retrieval and fan-out behavior found that only 15% of the pages ChatGPT retrieves earn a citation in the response. This report answers the next question: once a page makes it into the retrieval pool, what on-page signals determine whether it earns the citation?

For commercial queries, the answer changes based on where the user sits in the buying journey. A user exploring what solutions exist needs different content than a user comparing two named products or confirming a specific price. The pages that earn citations reflect that difference in structure, format, and level of detail.

Those practical implications come from a broader set of patterns we examined across cited pages and retrieved-but-not-cited pages. To identify what helps content earn the citation in commercial search, our research set out to answer three questions:

- Which on-page signals most consistently separate cited pages from retrieved-but-not-cited pages in commercial search?

- Which signals remain consistent across commercial query types, and which change depending on the stage of the buying journey?

- What do those patterns reveal about the kinds of page structure ChatGPT treats as more citation-worthy in commercial search?

Together, these patterns help clarify what happens after retrieval and what kinds of commercial page structure are more likely to earn the citation.

Methodology

This report focuses exclusively on commercial intent, drawing on the commercial subset of the base dataset used in our earlier research on ChatGPT’s retrieval and fan-out behavior. This subset includes 7,500 commercial queries and the 217,508 pages ChatGPT retrieved during answer generation.

To understand how citation patterns changed by query type, we divided the queries into four evenly sized groups, one for each stage of the buying journey, with 1,875 queries in each:

- Awareness: what solutions exist for a problem.

- Research: best or top options within a category.

- Comparison: head-to-head product comparisons.

- Validation: specific product details such as pricing, features, or compatibility.

For each retrieved page, we extracted a set of 25 on-page content signals tied to structure, readability, formatting, and page organization. These included heading alignment and count, sentence length, section length, word count, lists, tables, images, internal links, external links, statistics and their types, and quotes.
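To make the idea of an on-page signal concrete, here is a minimal sketch of how a handful of them could be pulled from raw HTML using only the Python standard library. It is not the extraction pipeline used in this study. The class name `SignalParser`, the function `extract_signals`, and the naive sentence splitter are all assumptions made for illustration, and the sketch covers only a few of the 25 signals.

```python
import re
from html.parser import HTMLParser


class SignalParser(HTMLParser):
    """Hypothetical helper: counts a few structural tags and collects text."""

    COUNTED = {"ul": "lists", "ol": "lists", "table": "tables", "img": "images"}
    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.counts = {"lists": 0, "tables": 0, "images": 0, "headings": 0}
        self.text_parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.COUNTED:
            self.counts[self.COUNTED[tag]] += 1
        elif tag in self.HEADINGS:
            self.counts["headings"] += 1

    def handle_data(self, data):
        # Note: this also picks up <script> and <style> text, which a real
        # pipeline would want to exclude.
        self.text_parts.append(data)


def extract_signals(html: str) -> dict:
    """Return a small dict of structural and readability signals."""
    parser = SignalParser()
    parser.feed(html)
    parser.close()

    text = " ".join(parser.text_parts)
    words = text.split()
    # Naive assumption: a sentence ends at ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

    signals = dict(parser.counts)
    signals["word_count"] = len(words)
    signals["avg_sentence_words"] = (
        len(words) / len(sentences) if sentences else 0.0
    )
    return signals
```

Calling `extract_signals(page_html)` on a fetched page returns a dictionary that can be compared with the ranges reported later in this article. Because headings and list items rarely end in punctuation, the sentence average here is only a rough estimate.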

We compared cited pages against retrieved-but-not-cited pages using logistic regression, random forest models, sensitivity-model variants, and domain-authority-stratified modeling to confirm which signals held after controlling for overlap between variables.
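As a rough illustration of the first model type named above, the sketch below fits a logistic regression by plain gradient descent. Everything in it is an assumption for demonstration: the data is synthetic, the two binary features (`has_lists` and `short_sentences`) are hypothetical stand-ins for the 25 signals, and the study's actual models and settings are not reproduced here.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit weights and a bias with batch gradient descent on log loss."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = p - y  # gradient of log loss with respect to the logit
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def toy_pages():
    """Synthetic data: (has_lists, short_sentences), page count, cited count."""
    groups = [((1, 1), 10, 6), ((1, 0), 10, 4), ((0, 1), 10, 3), ((0, 0), 10, 1)]
    rows, labels = [], []
    for features, total, cited in groups:
        for i in range(total):
            rows.append(features)
            labels.append(1 if i < cited else 0)  # 1 = cited, 0 = retrieved only
    return rows, labels


rows, labels = toy_pages()
weights, bias = fit_logistic(rows, labels)
for name, w in zip(("has_lists", "short_sentences"), weights):
    # exp(coefficient) is the odds ratio for that signal, holding the other fixed
    print(f"{name}: coefficient={w:.2f}, odds ratio={math.exp(w):.2f}")
```

Fitting both signals in one model estimates each coefficient with the other held constant. That is the sense in which a regression can control for overlap between variables.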

How the Buying Journey Influences What Wins in Commercial Search

Commercial pages that earn citations help both the user and the model move through a decision with less effort. But the structure that works changes as the user moves from understanding the category, to narrowing options, to comparing products, to validating a final detail.

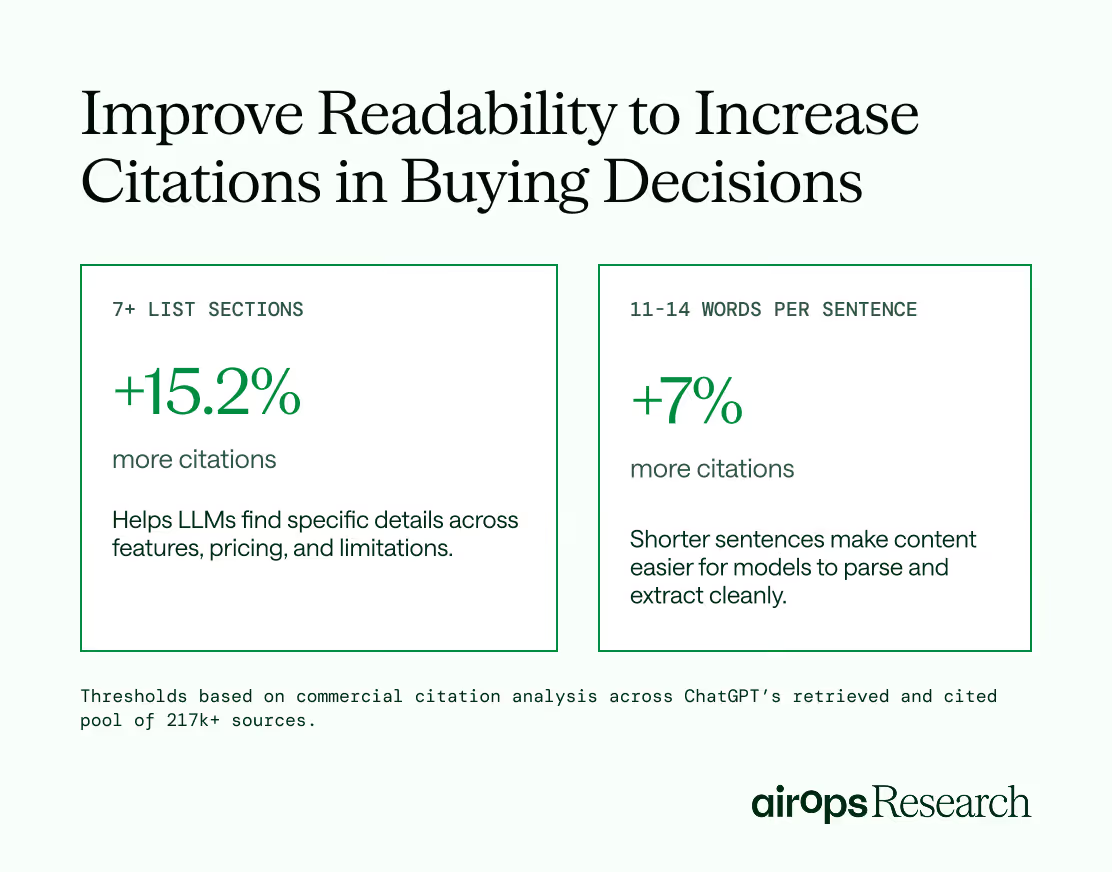

Across every stage of that journey, two patterns held consistently. Lists remained the strongest shared signal, and content that was easier to read, parse, and extract also performed better.

In commercial search, pages with 7 to 26 list sections were 6% to 15.2% more likely to earn a citation.

Pages averaging 11 to 14 words per sentence also had a roughly 7% higher likelihood of being cited, suggesting that commercial content performs better when information is presented in short, digestible units.

That pattern fits how LLMs process commercial pages across the buying journey.

At each stage, users are trying to answer a different kind of question, whether they are exploring options, narrowing choices, comparing products, or validating a final detail. Lists help organize the details that matter to that decision, while shorter sentences make the surrounding content easier for the model to read, parse, and extract cleanly.

But those shared patterns are only the starting point. The pages that earn the most citations make those details easy to isolate, compare, and extract. They match the format of the page to the decision the user is trying to make at that stage of the buying journey.

For teams, the takeaway is simple. Treat these as baseline structural requirements. Before refining a page for category exploration, comparison, or validation, make sure the content uses clear lists and short, easy-to-extract sentences.

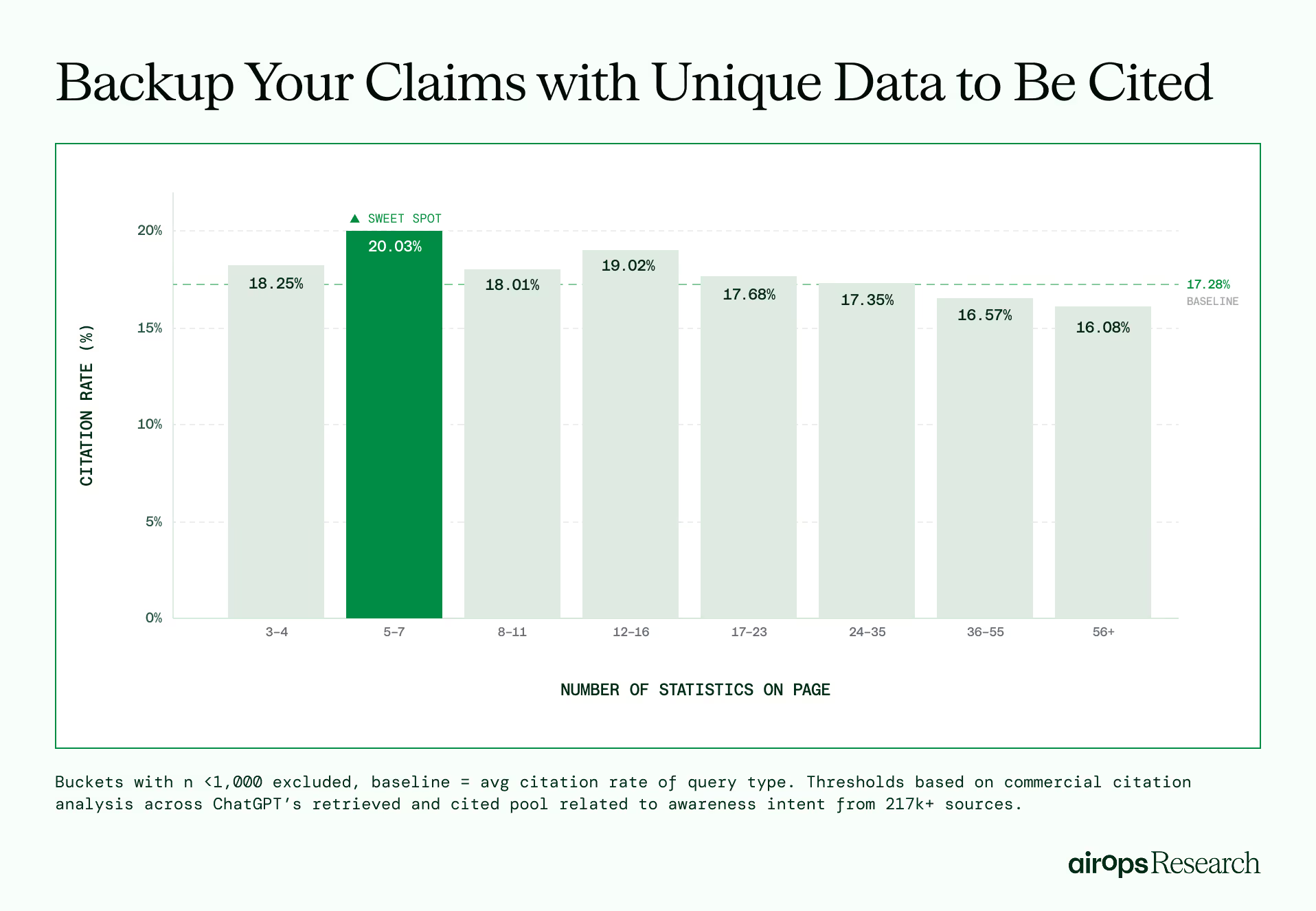

Early-Discovery Content Earns a 20% Higher Citation Likelihood When Claims Are Grounded in Data

Users in the awareness stage are trying to understand what kinds of solutions exist for their problem, not compare specific products. At this point, ChatGPT is helping define the category, surface the main solution types, and show the user where to look next.

The pages that earn citations at this stage explain the solution space clearly without becoming overly comprehensive.

- Content that includes 5 to 7 statistics to support claims, such as revenue impact or efficiency gains, has a 20.3% higher likelihood of earning a citation.

- Content that is clear, concise, and stays between 1,301 and 1,500 words has a 10.8% higher citation likelihood.

At this stage, LLMs are helping users understand the category and where to look next, so the pages that earn citations explain the solution space clearly and include enough evidence to support the path forward.

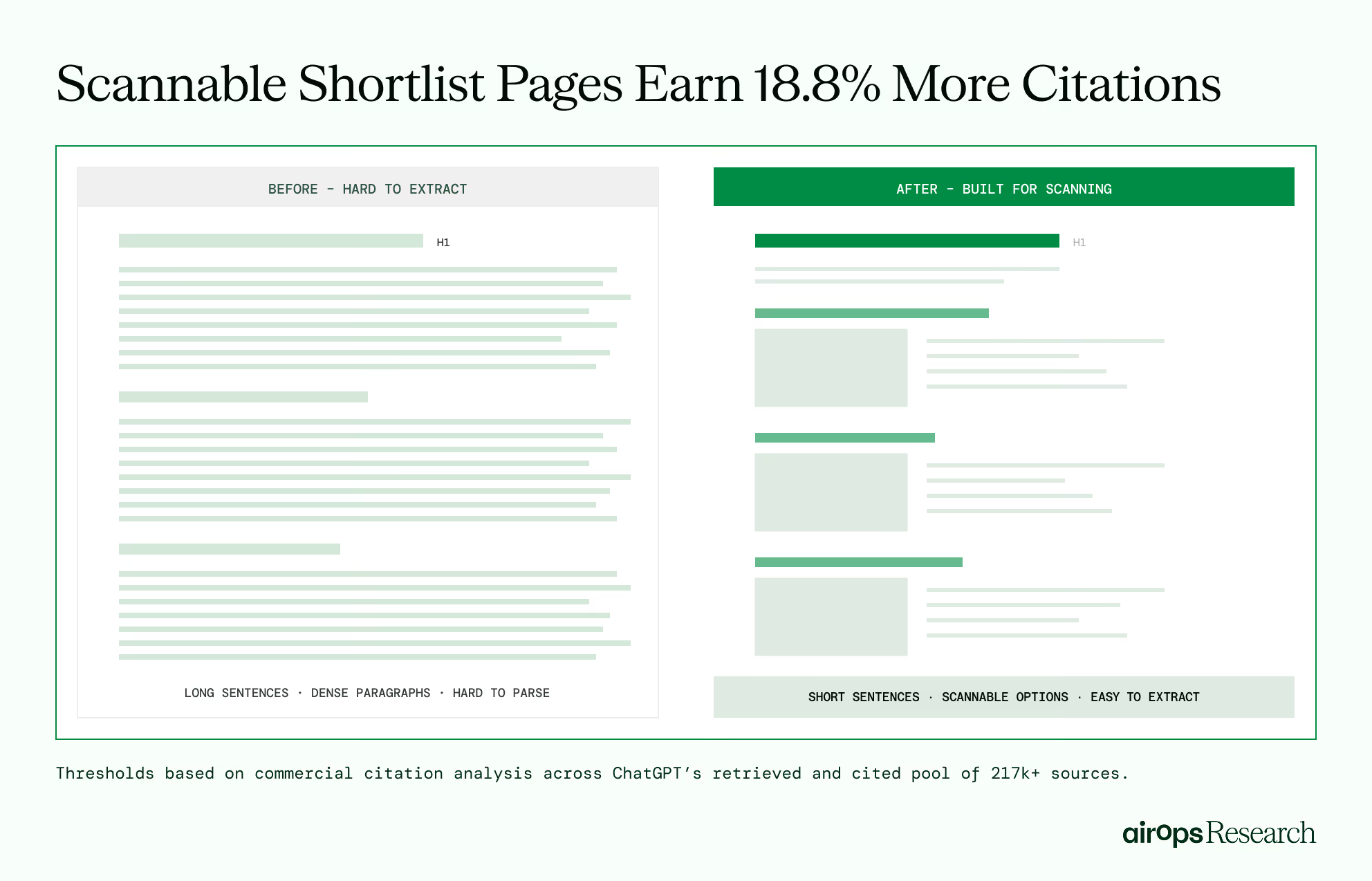

Pages That Make Shortlisting Options Scannable Earn 18.8% More Citations

When a user moves from awareness to active research, the query signals that AI search needs to help narrow options into a shortlist. Pages like "best project management tools" or "top CRM platforms for startups" need to make each recommendation scannable and individually assessable.

Pages with sentences averaging 10 words or fewer earn 18.8% more citations.

Shorter sentences make it easier for readers and AI search to understand differences between options, weigh tradeoffs, and identify the criteria that best satisfy the search intent.

Visual support and tighter page length reinforce that same pattern.

Pages with 10 images earn 16.4% more citations, and pages between 1,501 and 1,800 words earn 8.4% more citations.

When users are shortlisting options, images help separate recommendations, show how products differ, and give both the reader and the model clearer cues about what each option offers.

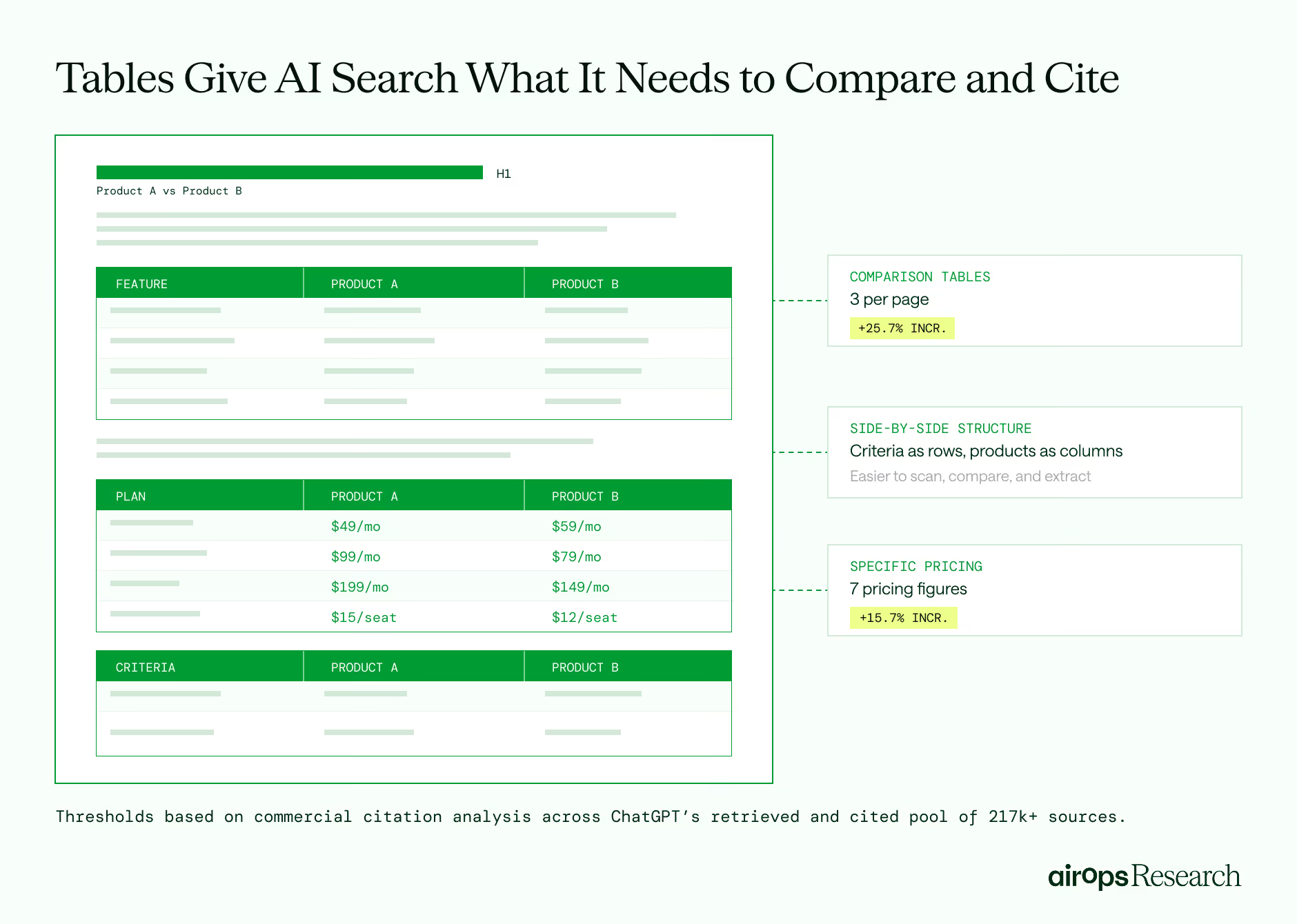

Comparison Content With Tables Earns 25.7% More Citations

Queries that compare specific products side by side, such as “HubSpot vs. Salesforce,” come later in the buying journey, when AI search needs to help the reader evaluate one named option against another across the details that shape a decision.

Pages with 3 tables earn 25.7% more citations. Tables give AI search a cleaner format for side-by-side evaluation, with criteria separated across rows and each product organized across columns.

Pages with 7 distinct price points earn 15.7% more citations, giving AI search enough pricing detail to compare plan tiers, per-seat costs, and monthly versus annual pricing directly.

For brands, comparison content should be built for side-by-side evaluation, not broad description. Use tables to organize features, pricing, and tradeoffs in one place, and include specific price points, plan tiers, and pricing details AI search can compare directly.
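One way to audit a comparison page against the price-point finding is to count the distinct prices it mentions. The sketch below is a simplified assumption of how that count might be made. It is not the method used in this study. It recognizes only US dollar amounts written with a `$` sign, and the names `PRICE_PATTERN` and `distinct_price_points` are hypothetical.

```python
import re
from decimal import Decimal

# Matches amounts such as $20, $45.50, or $1,200.00 (USD only, by assumption).
PRICE_PATTERN = re.compile(r"\$\s?(\d{1,3}(?:,\d{3})+|\d+)(\.\d{1,2})?")


def distinct_price_points(text: str) -> set[Decimal]:
    """Return the set of unique dollar amounts found in the text."""
    prices = set()
    for whole, cents in PRICE_PATTERN.findall(text):
        # Strip thousands separators so "$1,200" and "$1200" count once.
        prices.add(Decimal(whole.replace(",", "") + cents))
    return prices


sample = (
    "Starter is $20 per seat per month, or $200 billed annually. "
    "Pro is $45/month. Enterprise starts at $1,200.00. Starter: $20.00."
)
print(len(distinct_price_points(sample)))
```

Using `Decimal` means `$20` and `$20.00` are treated as the same price point, so repeated mentions of one plan do not inflate the count.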

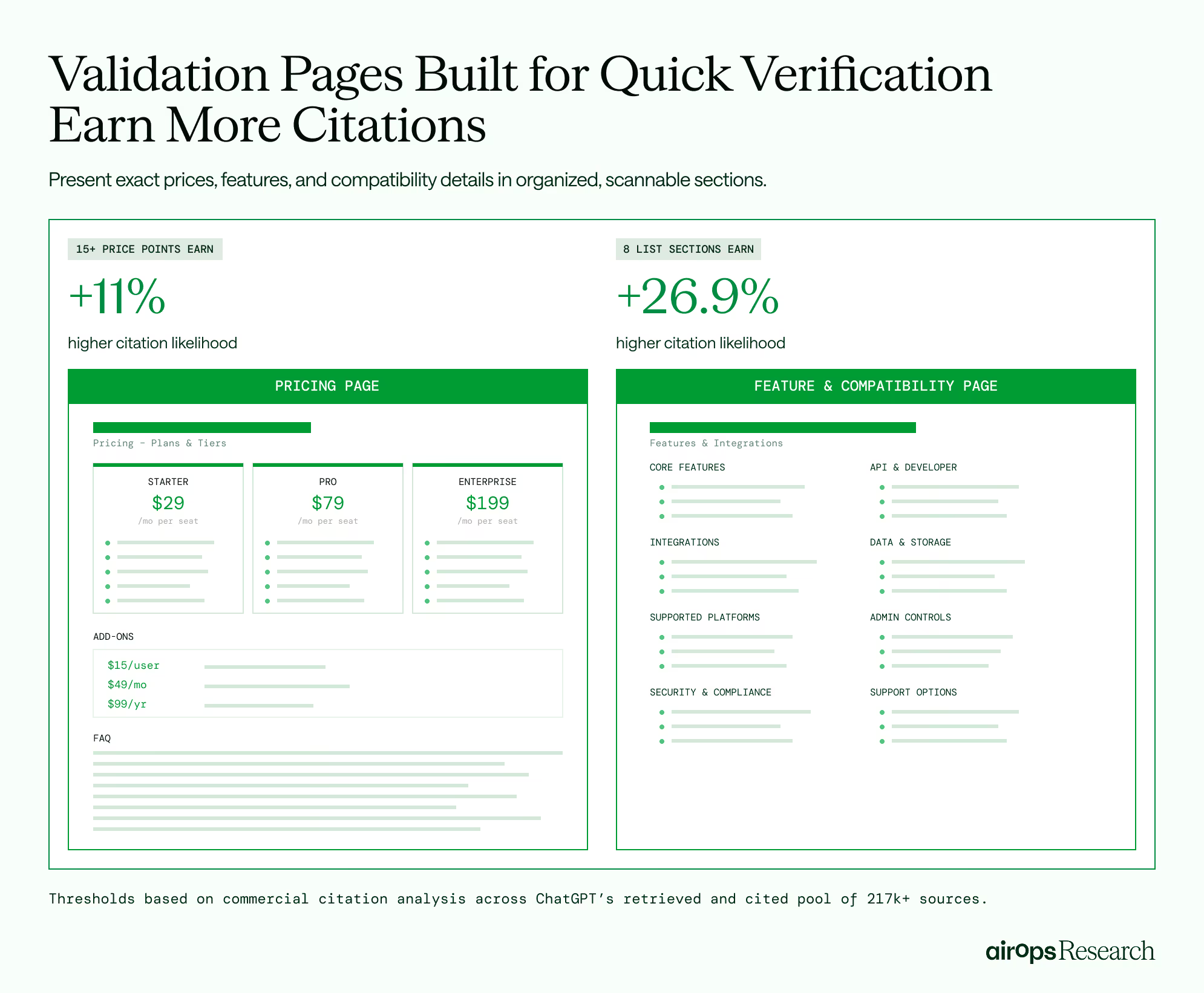

Validation Pages With Organized Lists Earn Up to 27% More Citations

Validation queries signal that AI search does not need a broad explanation or a shortlist. It needs content that can confirm one specific detail, such as pricing, compatibility, or whether a feature exists, before the user makes a decision.

That pattern shows up in two signals:

- Content with 8 list sections earns up to 26.9% more citations.

- Content that includes 15+ price points has an 11.0% higher likelihood of earning a citation.

For brands, pages like pricing pages, feature lists, and compatibility documentation should be built for quick verification.

That means presenting exact prices, per-seat costs, tier breakdowns, features, integrations, and compatibility details in an easy-to-scan format, and keeping those details up to date so AI search can rely on the page as a trusted source.

What This Means for Content and SEO Teams

The buying journey should shape how commercial pages are built, not just what topics they cover.

Pages that earn citations in AI search do more than target the right stage. They present information in the format that makes it easiest for AI search to identify, extract, and use at that point in the decision process.

1. Adjust writing style by buying stage

The same writing style will not work equally well across every commercial page type. Shortlist pages performed best when sentences averaged 10 words or fewer, and comparison and validation pages also benefited from clear, direct language. Review sentence length on these pages and simplify sections that bury key details in long paragraphs.

2. Support awareness content with real evidence

Early-stage commercial pages with 5 to 7 statistics had a 20.3% higher likelihood of being cited. If a page introduces a category, solution type, or approach, support the core claims with quantitative evidence. Focus on statistics that help explain the market, clarify tradeoffs, or show why the category matters.

3. Audit comparison pages first

Comparison pages showed one of the strongest lifts in this study. Pages with 3 tables had a 25.7% higher citation likelihood. Start by reviewing your versus pages and competitor comparisons. If they rely mostly on prose, add structured tables that clearly lay out pricing, features, limitations, and tradeoffs.

4. Treat validation pages as a core citation surface

Pricing pages, feature lists, and compatibility documentation with organized list sections earned up to 26.9% more citations. Review the pages buyers use to confirm final details before they convert. Make sure prices, integrations, features, and compatibility details are easy to scan, specific, and current.
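The benchmarks reported in this article can be collected into a simple lookup that flags where a page falls outside the ranges for its stage. This is a hedged sketch, not an AirOps tool. The names `BASELINE`, `STAGE_BENCHMARKS`, and `find_gaps` are hypothetical. Storing a single reported value such as "3 tables" as an exact target is an assumption that is stricter than the findings support, and letting a stage value override the shared baseline is also a design choice made here.

```python
# Ranges are inclusive (low, high); None means unbounded on that side.
# Single reported values are stored as low == high (an assumption).
BASELINE = {"list_sections": (7, 26), "avg_sentence_words": (11, 14)}

STAGE_BENCHMARKS = {
    "awareness": {"statistics": (5, 7), "word_count": (1301, 1500)},
    "research": {
        "avg_sentence_words": (None, 10),
        "images": (10, 10),
        "word_count": (1501, 1800),
    },
    "comparison": {"tables": (3, 3), "price_points": (7, 7)},
    "validation": {"list_sections": (8, 8), "price_points": (15, None)},
}


def find_gaps(stage: str, signals: dict) -> dict:
    """Return {signal: (observed, (low, high))} for out-of-range signals."""
    # Stage-specific targets override the shared baseline where both exist.
    targets = {**BASELINE, **STAGE_BENCHMARKS[stage]}
    gaps = {}
    for name, (low, high) in targets.items():
        observed = signals.get(name)
        if observed is None:
            continue  # signal was not measured, so there is nothing to compare
        too_low = low is not None and observed < low
        too_high = high is not None and observed > high
        if too_low or too_high:
            gaps[name] = (observed, (low, high))
    return gaps


page = {"tables": 0, "price_points": 7, "list_sections": 9, "avg_sentence_words": 18}
print(find_gaps("comparison", page))
```

The output is a starting point for a content review, not a score. These ranges describe correlations in one dataset, so a flagged signal is a prompt to look at the page, not proof that a change will earn a citation.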

Curious how well your content is optimized across the signals that matter? Take our free content assessment and get prioritized insights across discoverability, structure, readability, and accuracy, so you know exactly where to start.

As AI search continues to grow as a primary channel for discovery, the gap between retrieved content and cited content will become harder to ignore. In commercial search, format matters. Teams should review commercial pages based on the decision they support, then make sure the content is written and structured in a way that helps AI engines find and extract the information that satisfies the user's search intent.

Ready to structure your commercial content for AI Search?

Book a strategy session to learn how AirOps helps brands build pages that earn citations at every stage of the buying journey.