Search behavior is changing.

In AI search, people ask longer, more specific questions that sound more like real decision-making than traditional keyword searches.

AirOps' recent research on the influence of retrieval, fan-out behavior, and SERPs found that ChatGPT does not stop at the original prompt. It expands searches through query fan-out, evaluates supporting questions, and often discovers cited pages through those follow-up paths rather than the starting query alone.

That changes how visibility is won.

Winning AI search is no longer just about ranking for a head term. It is about showing up across the broader set of questions, comparisons, and supporting topics the model uses to build an answer.

AI Search Changed How Users Find Information

Traditional search was built around short, compressed keywords. AI search looks different. Prompts are longer, more detailed, and often include the context, use case, or decision criteria that used to remain unspoken.

That matters because the prompt is no longer just a topic signal. It is often a bundled expression of intent. A user is not only asking what something is. They are also asking which option fits their situation, what tradeoffs matter, and what they should do next.

For brands, that changes what content needs to do. It is no longer enough to target a primary term and lightly cover related ideas. Content needs to align with the real questions, constraints, and comparisons that show up inside AI prompts.

AI search increases the value of content that covers the surrounding decision space, not just the category term.

The Long Tail is the New Competitive Surface

AI search shifted the competitive surface away from the head and deeper into the long tail. The prompts that shape retrieval and citation are often longer, more specific, and more contextual than the queries most teams are used to targeting.

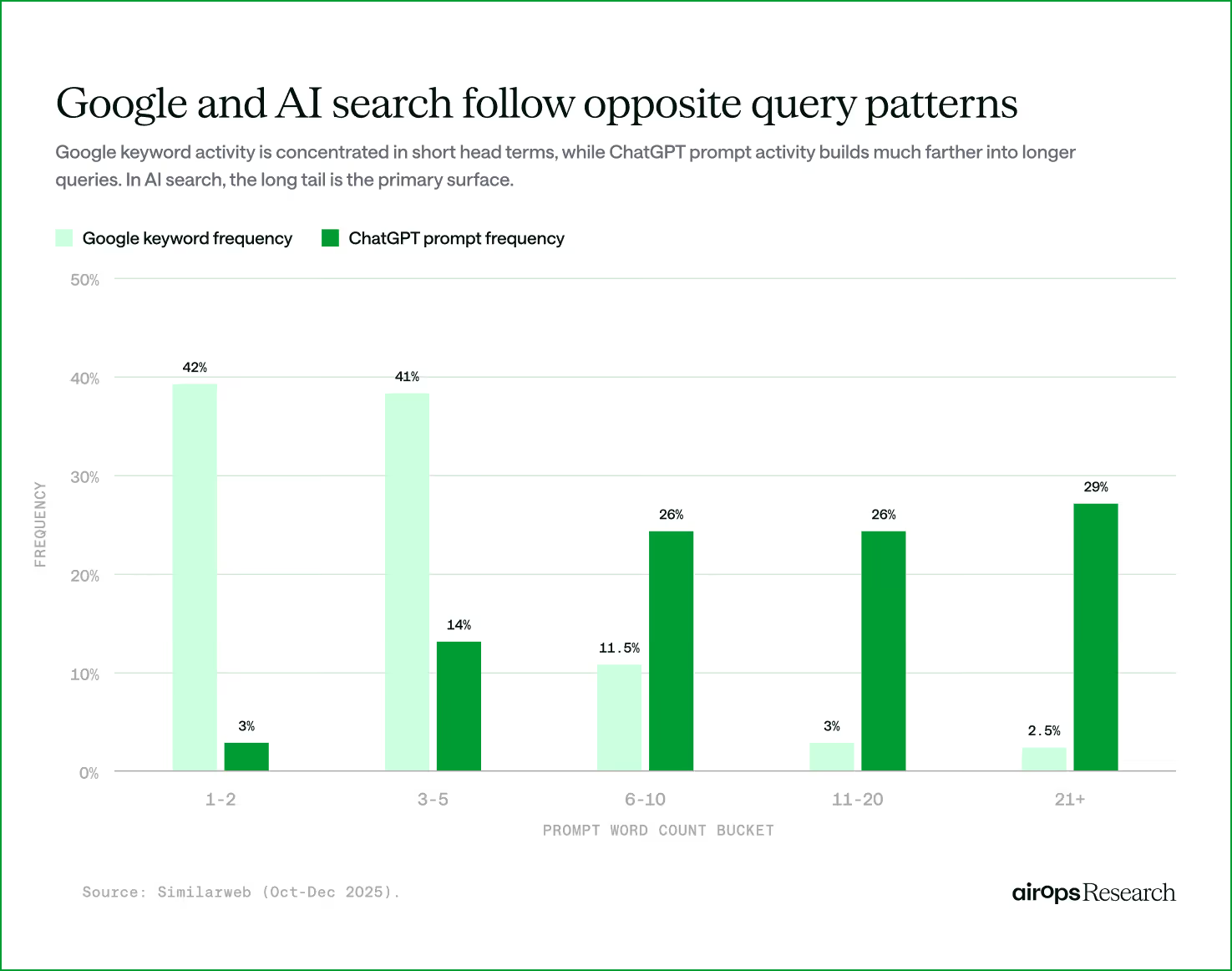

Google and AI Follow Different Search Patterns

Google search is still concentrated around head terms, where users type short, compressed keyword phrases. That pattern shaped how people learned to search and how marketers learned to measure demand.

How people use AI to seek and find information follows a different pattern.

People use AI search more like a conversation, which means prompts are often longer, more detailed, and more reflective of the real question behind the search.

A study from Seer Interactive found that 95% of Gemini’s fan-out queries had zero monthly search volume by traditional metrics. This means many of the questions that shape visibility in AI search sit outside the head terms brands are used to targeting.

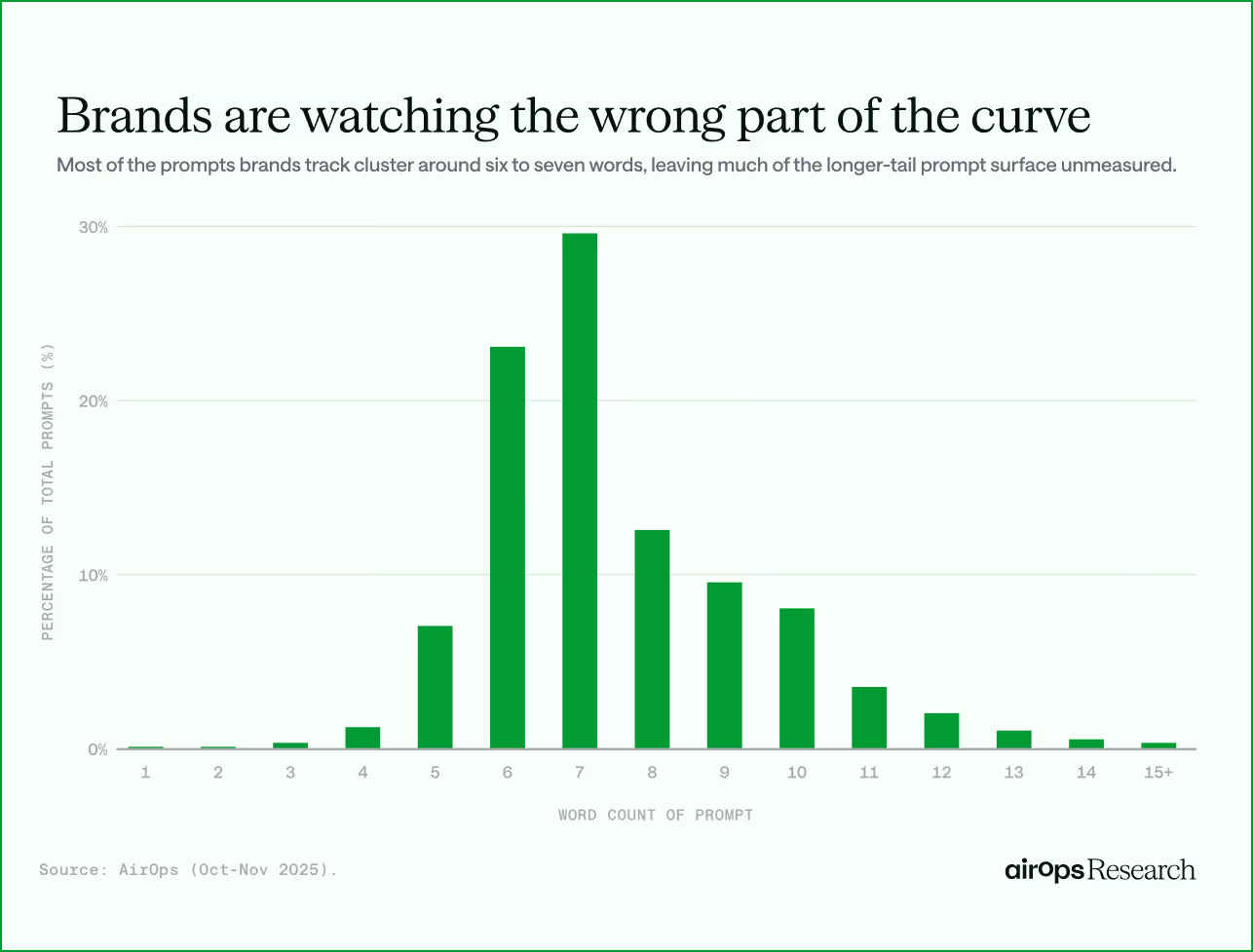

Marketers Are Still Measuring the Wrong Part of the Curve

Even teams that have started tracking AI prompts are still biased toward the head. The prompts they monitor are often shorter and more keyword-like than what people actually ask in AI search.

AirOps analyzed 245K+ prompts that customers were tracking and found that monitored prompts peaked at around 6 to 7 words, with very little coverage beyond 10. The prompts people actually use in ChatGPT peak much farther into the long tail, where they are longer and more detailed.
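To check where your own tracked prompts sit on that curve, a rough sketch like the one below can help. It assumes prompts are plain strings and that "length" means whitespace-separated words. The sample prompts and function names are hypothetical, and this is not the method AirOps used in its analysis.

```python
from collections import Counter


def word_count_distribution(prompts):
    """Map each word count to the number of prompts with that length."""
    return Counter(len(p.split()) for p in prompts)


def share_longer_than(prompts, threshold=10):
    """Fraction of prompts with more than `threshold` words."""
    if not prompts:
        return 0.0
    long_prompts = sum(1 for p in prompts if len(p.split()) > threshold)
    return long_prompts / len(prompts)


# Hypothetical tracked prompts, for illustration only.
tracked = [
    "best crm for small business",
    "project management software pricing comparison",
    "which crm fits a five person agency that bills hourly and needs invoicing",
]

distribution = word_count_distribution(tracked)
long_tail_share = share_longer_than(tracked, threshold=10)
print(sorted(distribution.items()), round(long_tail_share, 2))
```

If most of the distribution sits at 5 to 7 words and the share beyond 10 words is close to zero, the tracked set probably leans toward the head.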

As a result, brands may think they have visibility coverage while missing many of the prompts most likely to shape retrieval and citation. Teams that focus only on short, familiar queries will miss much of the surface area where AI visibility is actually won or lost.

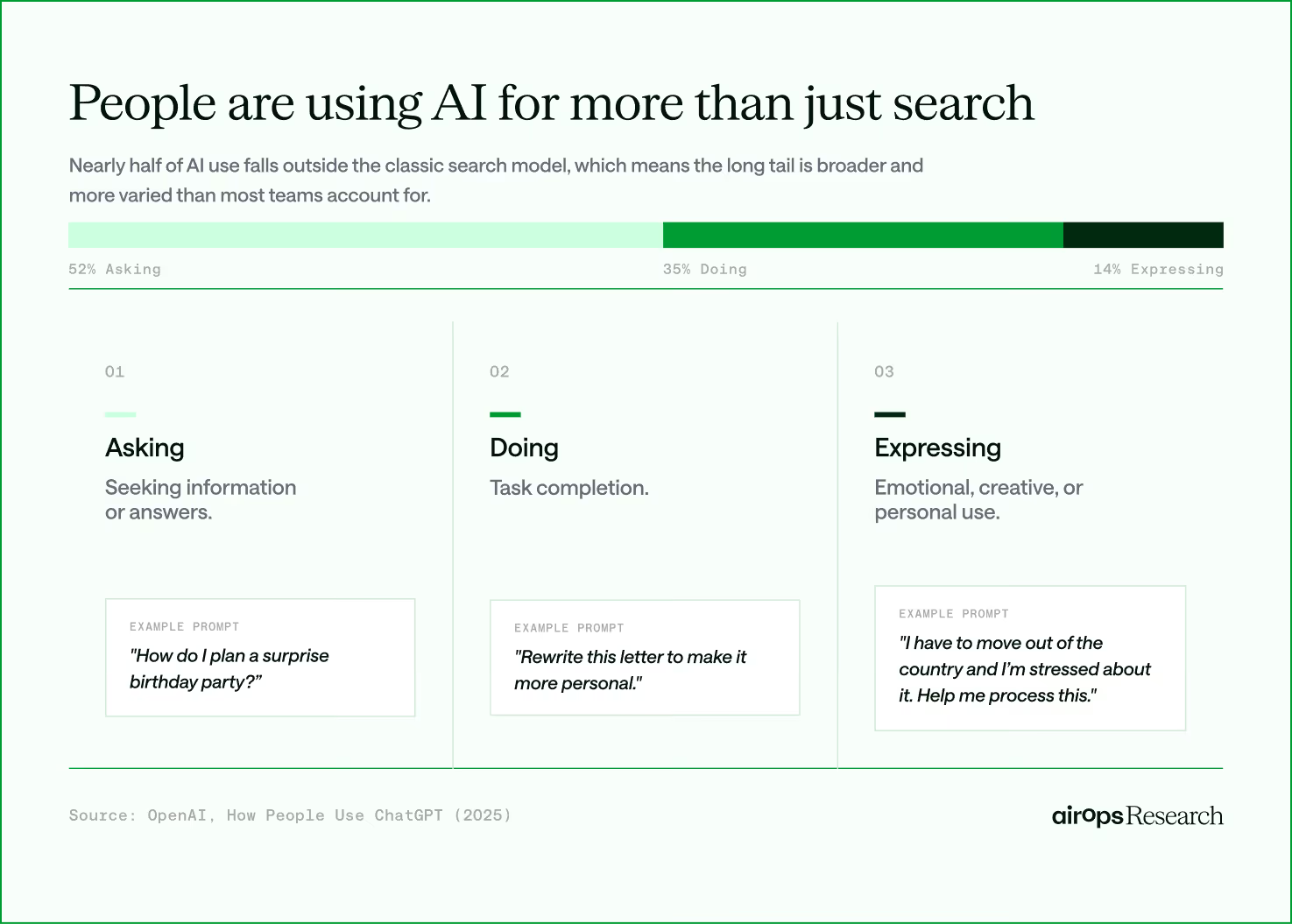

The Way People Use AI Search Is Broader Than Search Alone

The long tail is not just about longer prompts. It also reflects a broader mix of user intent, with people using AI search in ways that extend beyond traditional search behavior.

OpenAI Research found that monthly AI sessions break down into 52% Asking, 35% Doing, and 14% Expressing. In other words, nearly half of the ways people use AI search fall outside the classic search model. The long tail is not only bigger than most teams think; it is also more varied.

That changes what visibility strategy needs to account for.

People use AI search not only to ask questions, but also to complete tasks, refine work, and navigate specific situations. Brands that create content only for classic search-like questions will miss much of the context AI systems use when they retrieve, compare, and assemble answers.

How AI Search Discovers and Selects Sources

The long tail shapes demand. It’s also shaped by how AI models find and choose sources. Two mechanics under the hood matter most.

Where Citations Actually Come From

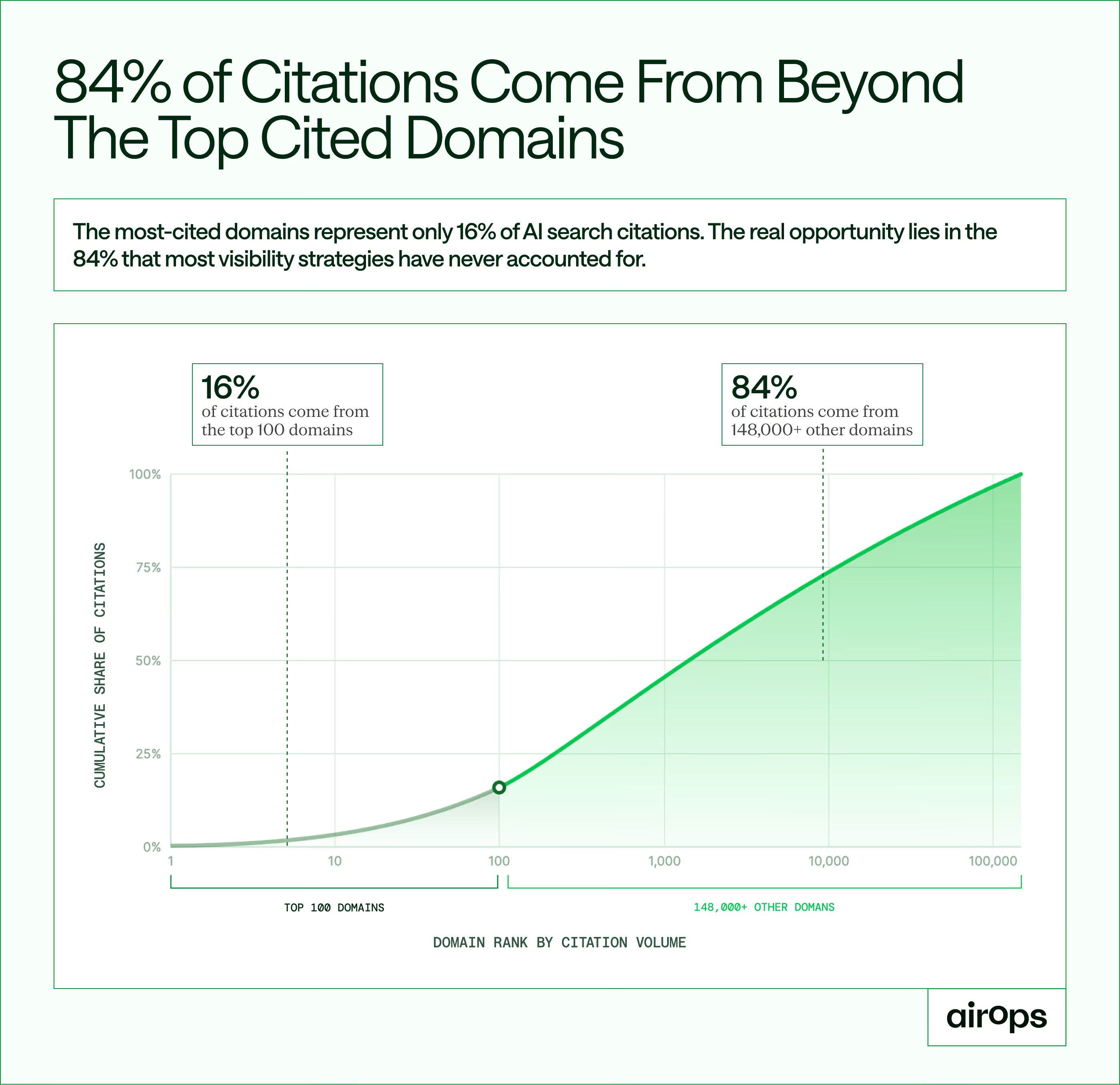

In 89.6% of searches, ChatGPT expanded the original prompt into two or more fan-out queries before deciding what to cite. That wider path connects long-tail prompts to long-tail content:

- 32.9% of cited pages were discovered only through fan-out, not the original query.

- 84% of all citations come from beyond the top 100 domains; the remaining 148K+ domains make up the bulk. There is no "page one" to win.
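One way to read the fan-out figure is as a set calculation. The sketch below assumes that, for a single answer, you have the cited URLs, the URLs returned for the original prompt, and the URLs returned for its fan-out queries. The function name and sample URLs are hypothetical, and the research may define the metric differently.

```python
def fanout_only_share(cited_urls, original_serp_urls, fanout_serp_urls):
    """Share of cited pages that appear in fan-out results but not in the
    results for the original prompt."""
    cited = set(cited_urls)
    if not cited:
        return 0.0
    original = set(original_serp_urls)
    fanout = set(fanout_serp_urls)
    fanout_only = {url for url in cited if url in fanout and url not in original}
    return len(fanout_only) / len(cited)


# Hypothetical URLs, for illustration only.
cited = ["a.example/guide", "b.example/compare", "c.example/pricing"]
original_results = ["a.example/guide", "x.example/home"]
fanout_results = ["a.example/guide", "b.example/compare", "c.example/pricing"]

share = fanout_only_share(cited, original_results, fanout_results)
print(round(share, 2))
```

Here two of the three cited pages were reachable only through fan-out queries, so tracking the starting prompt alone would have missed them.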

Not Everything Retrieved Gets Referenced

Only 15% of the pages ChatGPT retrieves during answer generation are actually cited. The model pulls many candidates, then selects the ones that best match the user's intent and the queries it runs to build the answer:

- Pages with 50%+ title-query overlap were cited at 20.1% vs. 9.3% for pages below 10% overlap.

- 32.9% of cited pages appeared only in SERPs for a fan-out query rather than the starting prompt.

Long-tail demand creates long-tail citation opportunities. The discovery mechanism actively searches for specificity, and the selection mechanism rewards it. That is why the next step is finding the right questions and creating content that answers them.

Finding and Creating Long-tail Content

If the long tail is where AI visibility is won, brands need a reliable way to find the questions that matter and create content that answers them. This is not just a content production problem. It is a discovery problem first.

Where to Find Long-tail Prompt Intelligence

Most of the long tail will not show up in keyword tools, meaning many of the questions shaping retrieval and citation sit outside the systems most teams still use for content planning.

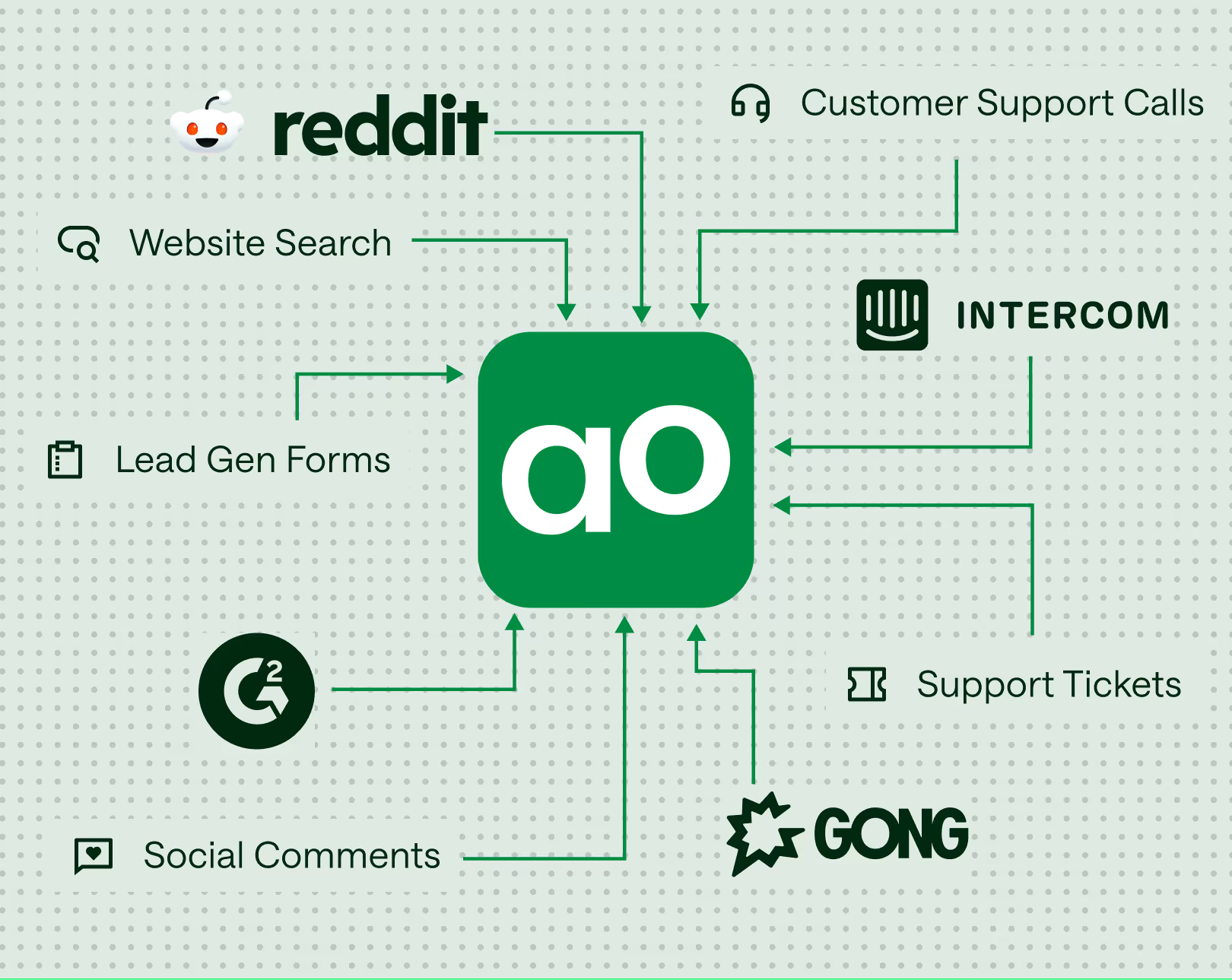

The better signal is often much closer to the customer. Communities, call transcripts, help desks, and user interviews capture the questions people already ask in their own words.

This changes where teams should look for demand. Instead of collecting more keywords, teams need a repeatable way to identify the real questions buyers ask across the decision journey. Those questions often surface parts of the long tail that traditional tools miss.
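As a starting point, a small script can pull the literal questions out of transcripts or support tickets and count the ones that repeat. This is a minimal sketch. It assumes plain-text input with ordinary sentence punctuation, and the function names and sample text are hypothetical.

```python
import re
from collections import Counter


def extract_questions(text):
    """Return the sentences in `text` that end with a question mark."""
    # Naive sentence split: a run of non-terminal characters followed by . ! or ?
    # Trailing text without end punctuation is ignored.
    sentences = re.findall(r"[^.!?]+[.!?]", text)
    return [s.strip() for s in sentences if s.strip().endswith("?")]


def count_questions(documents):
    """Count how often each question (lowercased) appears across documents."""
    counts = Counter()
    for doc in documents:
        for question in extract_questions(doc):
            counts[question.lower()] += 1
    return counts


# Hypothetical transcript snippets, for illustration only.
transcripts = [
    "We use spreadsheets today. Can the tool import from Excel? Pricing matters too!",
    "Can the tool import from Excel? How long does onboarding take?",
]

for question, count in count_questions(transcripts).most_common():
    print(count, question)
```

Exact-match counting will undercount paraphrases, so treat the output as a list of candidate questions to review rather than a demand estimate.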

What Long-tail Content Needs to Look Like

Finding the right questions is only the first step. The content also needs to answer those questions clearly enough to be retrieved, extracted, and selected.

AirOps Research found that pages with 50%+ title-query overlap had a 20.1% citation rate, compared with 9.3% for pages with less than 10% overlap. That means content that closely matches the specific question is more likely to be cited than content built around a broad category term.
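The research summary here does not spell out how title-query overlap is calculated. One plausible reading, assumed for this sketch, is the share of distinct query words that also appear in the page title. The function names and sample strings are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def title_query_overlap(title, query):
    """Fraction of distinct query tokens that also appear in the title.

    Assumed definition for illustration; the source does not specify one.
    """
    query_tokens = tokenize(query)
    if not query_tokens:
        return 0.0
    return len(query_tokens & tokenize(title)) / len(query_tokens)


# Hypothetical query and titles, for illustration only.
query = "best crm for small agency"
specific_title = "How to Choose a CRM for a Small Agency"
broad_title = "CRM Software"

print(title_query_overlap(specific_title, query))  # 4 of 5 query words match
print(title_query_overlap(broad_title, query))     # 1 of 5 query words match
```

Under this definition the question-specific title clears the 50% mark while the category title falls to 20%, which is the gap the citation-rate comparison describes.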

For marketers, that points to a simple sequence:

- Build each page around a specific long-tail question, not a broad category term.

- Put the answer in plain text near the top so models can easily find and extract it.

- Add something generic pages cannot offer: original data, a worked example, or a concrete recommendation.

A page does not need to rank for a broad head term to be valuable. It needs to be retrievable, clearly structured, and directly relevant to the longer-tail questions people are actually asking.

Winning in AI Search Starts in the Long Tail

AI search moved the competitive surface from head terms to the long tail. Most brands are still tracking shorter, keyword-like prompts while the queries that shape citations sit much farther out.

The playbook is straightforward: mine the questions buyers already ask, create content that is findable, extractable, and worth citing, and track visibility across the full prompt surface.

The brands that do this first will own the long-tail positions their competitors do not yet know exist.

Learn how AirOps helps teams track and improve visibility across AI search, from long-tail prompts to fan-out queries to the sources models retrieve and cite.


Sources: AirOps Research, The Influence of Retrieval, Fan-out Behavior, and SERPs; AirOps internal analysis of 245K+ tracked prompts; Seer Interactive research on Gemini fan-out queries; OpenAI Research, How People Use ChatGPT (2025); AEO Conf 2026 presentation insights from Alex Halliday (AirOps) and Ethan Smith (Graphite).