AirOps vs Adobe LLM Optimizer: Which Platform Helps Teams Ship AI-Ready Content?

- Adobe LLM Optimizer tracks brand visibility across AI platforms and can deploy edge-layer adjustments for agent-facing traffic without changing CMS content

- AirOps connects AI search visibility signals with SEO and engagement data, then turns those insights into refresh and creation programs that publish directly to your CMS

- Adobe requires CDN log forwarding to populate the Agentic Traffic dashboard and surface agent-level crawl diagnostics

- Adobe licensing centers on tracked prompt volume, with a minimum purchase of 1,000 prompts

- AirOps supports teams that need to refresh large content libraries, create net-new pages, and connect AI visibility improvements to measurable performance signals

If you track how your brand shows up in AI answers, you already know the frustrating part: visibility signals move fast, but content updates still move at human speed.

That mismatch creates a practical question for SEO and content leaders: Do you need a platform that improves how AI agents interpret what you already published, or do you need a platform that helps your team create and refresh source content at scale?

AirOps and Adobe LLM Optimizer both speak to AI search visibility, but they solve different constraints. This comparison focuses on day-to-day use: what each platform changes, where updates live, how teams act on recommendations, and how quickly you can ship.

AirOps vs Adobe LLM Optimizer at a glance

Before diving deeper, the table below shows how AirOps and Adobe LLM Optimizer compare across the areas most teams evaluate when choosing an AI visibility platform.

The table highlights the core difference: Adobe focuses on AI visibility monitoring and agent-facing optimizations, while AirOps connects visibility insights to content programs teams can actually ship.

AirOps vs Adobe LLM Optimizer: platform overview

AirOps and Adobe LLM Optimizer approach AI search visibility from different angles.

Adobe LLM Optimizer sits between your website and AI agents. It injects technical fixes at the CDN layer so bots see cleaner versions of your pages. The product fits enterprises already running Adobe Experience Cloud that want to protect visibility without changing their CMS.

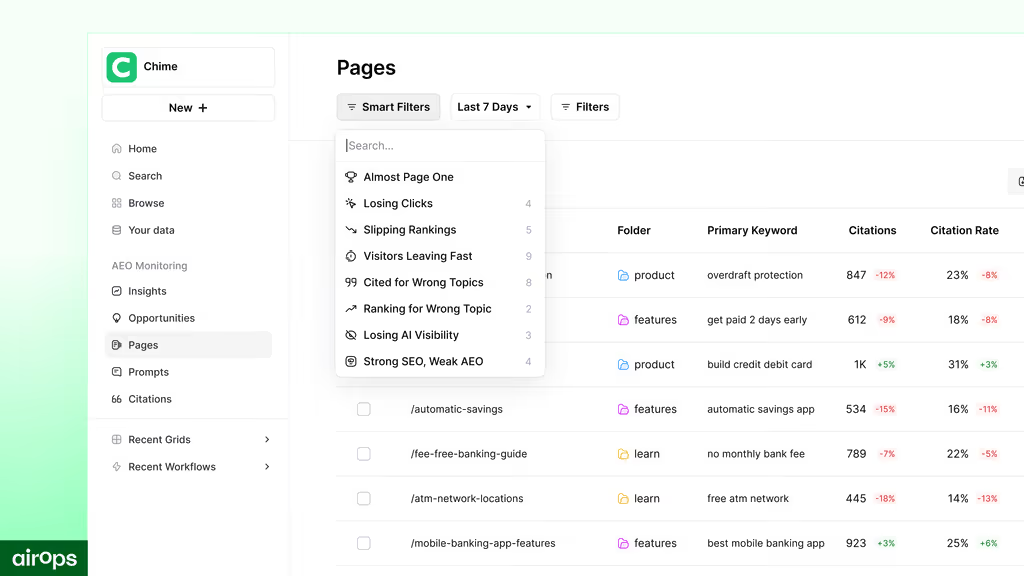

AirOps treats AI visibility as a content system problem. It connects AI citation data with SEO rankings and engagement metrics, then helps teams ship the fixes. Content and growth teams use Page360 to measure, the Opportunities Engine to prioritize, and the Grid and Workflows to execute.

Adobe adjusts how agents interpret existing pages. AirOps improves the pages themselves so both humans and AI systems see stronger source content that can earn citations.

Core capabilities: how the products differ in real work

Measurement: what you can see, and what you can explain

Adobe provides AI search reporting and agentic traffic diagnostics. The Agentic Traffic dashboard depends on CDN log forwarding, and Adobe documentation notes that without it the dashboard stays empty. The data shows which agents access which URLs and how those patterns change.
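
To make agent-level diagnostics concrete, here is a minimal Python sketch of how forwarded CDN log records could be grouped by AI agent and URL. It is not Adobe's implementation. The record fields (`user_agent`, `path`), the helper names, the sample records, and the list of agent tokens are all hypothetical assumptions for illustration.

```python
from collections import Counter
from typing import Optional

# Hypothetical, illustrative list of AI agent user-agent tokens (an assumption,
# not Adobe's list). Check each vendor's published crawler documentation.
AI_AGENT_TOKENS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")


def classify_agent(user_agent: str) -> Optional[str]:
    """Return the first known AI agent token in a user-agent string, or None."""
    ua = user_agent.lower()
    for token in AI_AGENT_TOKENS:
        if token.lower() in ua:
            return token
    return None


def agent_url_counts(records: list[dict[str, str]]) -> Counter:
    """Count requests per (agent, path) pair; non-agent traffic is skipped."""
    counts: Counter = Counter()
    for record in records:
        agent = classify_agent(record.get("user_agent", ""))
        if agent is not None:
            counts[(agent, record.get("path", ""))] += 1
    return counts


# Hypothetical sample records standing in for forwarded CDN log lines.
sample_records = [
    {"user_agent": "Mozilla/5.0 (compatible; GPTBot/1.0)", "path": "/pricing"},
    {"user_agent": "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0", "path": "/pricing"},
    {"user_agent": "Mozilla/5.0 (compatible; GPTBot/1.0)", "path": "/pricing"},
    {"user_agent": "ClaudeBot/1.0", "path": "/docs"},
]

print(agent_url_counts(sample_records))
```

Running the same count over two time windows and comparing the results is one simple way to see how agent access patterns change. Without forwarded logs there are no records to count, which is why the dashboard stays empty.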

AirOps focuses measurement on page-level signals that help teams decide what to update next. It combines AI search visibility with traditional SEO and engagement metrics in a URL-level view. That connection shows teams which pages earn citations, where visibility is shifting, and which updates to prioritize.

AirOps also benchmarks competitor visibility across AI search platforms. That helps teams identify citation gaps, spot emerging topics earlier, and prioritize work that can improve share of voice.
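
Share of voice has several definitions. One simple version, assumed here, is a brand's fraction of all citations observed across a set of tracked prompts. The Python sketch below computes that fraction and lists citation gaps, meaning prompts where competitors were cited but your brand was not. The `(prompt, brand)` data shape, the function names, and the sample data are hypothetical; this is not AirOps' calculation.

```python
from collections import Counter, defaultdict


def share_of_voice(citations: list[tuple[str, str]]) -> dict[str, float]:
    """Return each brand's fraction of all citations across tracked prompts."""
    counts = Counter(brand for _prompt, brand in citations)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


def citation_gaps(citations: list[tuple[str, str]], our_brand: str) -> set[str]:
    """Return prompts where at least one brand was cited but ours was not."""
    brands_by_prompt: dict[str, set[str]] = defaultdict(set)
    for prompt, brand in citations:
        brands_by_prompt[prompt].add(brand)
    return {p for p, brands in brands_by_prompt.items() if our_brand not in brands}


# Hypothetical citation observations: (tracked prompt, cited brand).
citations = [
    ("best crm for startups", "BrandA"),
    ("best crm for startups", "BrandB"),
    ("crm pricing comparison", "BrandB"),
    ("crm pricing comparison", "BrandB"),
]

print(share_of_voice(citations))
print(citation_gaps(citations, "BrandA"))
```

Each gap prompt is a candidate for a refresh or a net-new page.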

Executives rarely fund “AI visibility” as a standalone metric. They fund outcomes. A unified view makes it easier to connect citation movement to measurable performance.

Recommendations: what the platform suggests you do next

Adobe surfaces opportunities designed to improve how AI agents interpret existing pages. Many recommendations focus on structure and readability, since Optimize at Edge delivers adjustments to agentic traffic rather than updating the CMS itself.

AirOps organizes recommendations around work teams can publish. The platform highlights pages to refresh, topics that need new pages, and supporting updates that increase citation likelihood over time.

In practice, the distinction is simple. Adobe focuses on improving the interpretation of existing pages. AirOps focuses on expanding and maintaining a content library that consistently earns citations.

Execution: how updates ship, and where they live

Execution reveals the clearest difference between the platforms.

Adobe can deploy certain improvements at the edge for agentic traffic. According to Adobe documentation, Optimize at Edge delivers simplified versions of pages to agentic traffic, which helps LLMs interpret and summarize them more accurately. Because these adjustments happen outside the CMS, teams can still act when CMS changes take time.
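
The general pattern behind agent-facing delivery can be sketched as a routing decision: requests identified as AI agents receive a simplified variant, while everyone else receives the full page. The Python below is a hypothetical illustration of that idea only, not how Optimize at Edge works internally. The token list, the `PageVariants` type, and the function names are assumptions.

```python
from typing import NamedTuple

# Hypothetical, illustrative agent tokens (an assumption, not Adobe's detection logic).
AI_AGENT_TOKENS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")


class PageVariants(NamedTuple):
    full_html: str        # what human visitors receive from the CMS
    simplified_html: str  # hypothetical agent-facing variant served at the edge


def is_ai_agent(user_agent: str) -> bool:
    """Return True if the user-agent string contains a known AI agent token."""
    ua = user_agent.lower()
    return any(token.lower() in ua for token in AI_AGENT_TOKENS)


def select_variant(user_agent: str, page: PageVariants) -> str:
    """Serve the simplified variant to AI agents and the full page to everyone else."""
    return page.simplified_html if is_ai_agent(user_agent) else page.full_html


page = PageVariants(
    full_html="<html><body><nav>Menu</nav><main>Pricing details</main></body></html>",
    simplified_html="<html><body><main>Pricing details</main></body></html>",
)
print(select_variant("Mozilla/5.0 (compatible; PerplexityBot/1.0)", page))
print(select_variant("Mozilla/5.0 (Macintosh) Safari/605.1.15", page))
```

In this model the CMS copy never changes, so any improvement lives only in the agent-facing variant. Publishing the change to the CMS is what makes humans and agents see the same page.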

AirOps runs execution inside content programs. Teams apply repeatable processes across many pages, route outputs through review, and publish updates back to the CMS so improvements stay permanent.

In practice, this means:

- Adobe helps when the bottleneck sits in CMS governance and release cycles

- AirOps helps when the bottleneck sits in writing, refreshing, and publishing capacity

Governance: how each platform keeps brand content consistent

Adobe emphasizes enterprise governance and brand visibility protection. In the Optimize at Edge model, governance centers on controlling agent-facing adjustments so that inserted changes don't introduce risk.

AirOps places governance directly inside execution. Brand Kits store voice, tone, product context, and audience guidelines so every run follows the same rules. Knowledge Bases ground outputs in internal sources rather than in generic model training data.

For teams managing large content libraries, governance determines whether scale stays consistent or becomes difficult to manage.

Data and setup: what each platform needs from your org

Adobe: deeper diagnostics but heavier setup

Adobe uses CDN log forwarding to power agentic traffic analysis. The requirement appears directly in Adobe documentation. Setup often involves coordination across marketing, web ops, and IT.

If your organization already runs Adobe Experience Cloud, this process will likely feel routine. If not, the dependency can slow the time to first insight.

AirOps: faster setup for content teams

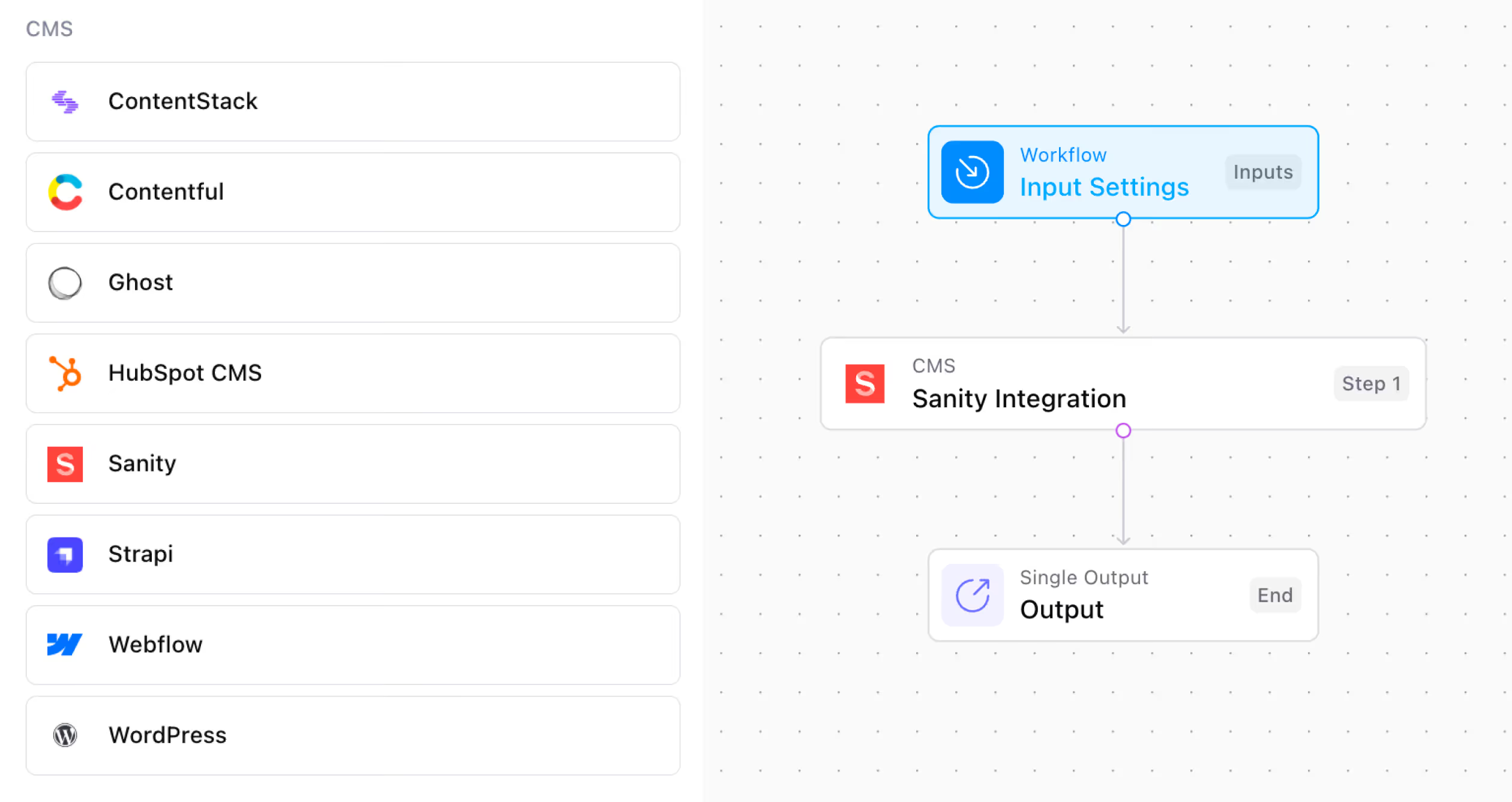

AirOps connects to common SEO tools, analytics systems, and CMS publishing paths through APIs. That setup tends to work well for lean content teams that want measurable progress this quarter.

Integrations: ecosystem fit matters more than feature lists

Adobe fits best in Adobe-standardized environments, especially where Adobe products already run content, analytics, and governance.

AirOps fits teams that run a mixed stack and need content execution within it. The platform focuses on connecting measurement signals to publishing so teams can ship without tool switching.

Pricing and value: how the model shapes behavior

Adobe licensing typically centers on tracked prompts and enterprise agreements. Adobe documentation references a minimum purchase of 1,000 prompts, with additional increments available as usage scales. Adobe also offers a limited “Try Before You Buy” option for some AEM customers with up to 200 prompts before a full license is required.

AirOps typically scales with execution volume (tasks). Teams can start on a free tier and expand as they operationalize more programs.

Why the model matters

- Prompt-based licensing can push teams to treat monitoring scope as a scarce resource, especially when multiple functions want access to the same visibility questions.

- Execution-based scaling usually aligns spend to shipped work, since spend rises when output rises.

When each platform makes sense

Choose Adobe LLM Optimizer when release cycles block action

Adobe fits when your organization can't move the CMS quickly and needs an agent-facing path to improvement.

Choose Adobe when:

- Your org standardizes on Adobe Experience Cloud tooling.

- Your team needs agentic traffic diagnostics that depend on CDN logs.

- Your team wants to deploy edge optimizations for agentic traffic without CMS authoring changes.

Choose AirOps when execution capacity blocks action

AirOps fits when your team can see what needs to change, but you can't refresh and create content fast enough.

Choose AirOps when:

- Your team must refresh hundreds of aging pages in a quarter.

- Your team must create net-new pages to cover topics competitors already own.

- Your team needs durable improvements in the CMS, not only agent-facing variants.

- Your team wants one system that connects measurement, prioritization, review, and publishing.

Strengths and limitations

AirOps

Strengths

- Connects AI search visibility signals with SEO and engagement signals in a URL-level view.

- Supports refresh and creation programs at scale through the Grid and repeatable runs.

- Publishes changes back to the CMS so content becomes a durable asset.

Limitations

- Custom automation requires upfront learning, especially for teams that want to build unique multi-step processes.

- Does not focus on CDN log-based agent request analysis as a primary experience.

Adobe LLM Optimizer

Strengths

- Provides agentic traffic reporting when teams complete CDN log forwarding.

- Supports Optimize at Edge, which can help teams act without CMS authoring changes.

- Fits enterprises already aligned to Adobe Experience Cloud.

Limitations

- Keeps improvements primarily in an agent-facing layer, which can split what agents receive from what humans see, depending on deployment choices.

- Limits execution to the edge optimization model rather than full content creation programs.

- Requires technical setup for agentic traffic reporting and related dashboards.

The real decision: monitoring visibility or shipping improvements

Start with the constraint your team faces most often.

If infrastructure and slow publishing cycles block progress, Adobe LLM Optimizer can help teams deliver agent-facing improvements at the edge without waiting on CMS updates. This approach fits organizations already embedded in Adobe Experience Cloud or operating within strict release processes.

If execution is the constraint, such as refreshing aging pages, filling topic gaps, and turning visibility signals into published updates, a content engineering approach makes more sense. AirOps connects AI search insights to repeatable creation and refresh programs that publish directly to the CMS.

Book a demo to see how AirOps helps teams turn AI search insights into published content that earns more citations and sustained visibility.

FAQs

What is the main difference between AirOps and Adobe LLM Optimizer?

AirOps helps teams improve the source content in their CMS so both humans and AI systems see the same updated content. Adobe LLM Optimizer focuses on monitoring AI visibility and delivering agent-facing optimizations at the edge.

Do teams need IT involvement to use Adobe LLM Optimizer?

Yes. Teams need CDN log forwarding to populate the Agentic Traffic dashboard, which Adobe documentation calls out directly. That setup usually requires coordination with web ops or IT.

How does Adobe licensing work?

Adobe licensing typically centers on tracked prompts. Packages start with a minimum of 1,000 prompts, with additional prompt volumes available as usage grows. Some AEM customers can access a limited trial before purchasing a full license.

Can Adobe LLM Optimizer create net-new content?

No. Adobe LLM Optimizer focuses on monitoring AI visibility and recommending improvements. It can deploy certain optimizations at the edge for agentic traffic, but teams usually need a separate system to run large-scale content creation or refresh programs.